Ecosyste.ms: Awesome

An open API service indexing awesome lists of open source software.

https://github.com/dianna-ai/dianna

Deep Insight And Neural Network Analysis


- Host: GitHub

- URL: https://github.com/dianna-ai/dianna

- Owner: dianna-ai

- License: apache-2.0

- Created: 2021-07-06T12:49:11.000Z (almost 3 years ago)

- Default Branch: main

- Last Pushed: 2024-05-22T12:53:06.000Z (about 1 month ago)

- Last Synced: 2024-05-22T13:58:37.111Z (about 1 month ago)

- Topics: explainable-artificial-intelligence, scientific-research

- Language: Jupyter Notebook

- Homepage: https://dianna.readthedocs.io

- Size: 269 MB

- Stars: 42

- Watchers: 5

- Forks: 12

- Open Issues: 104

Metadata Files:

- Readme: README.md

- Contributing: docs/CONTRIBUTING.md

- License: LICENSE

- Code of conduct: docs/CODE_OF_CONDUCT.md

- Citation: CITATION.cff

- Roadmap: docs/ROADMAP.md

Lists

- Awesome-explainable-AI - https://github.com/dianna-ai/dianna (Python Libraries(sort in alphabeta order) / Evaluation methods)

- awesome-machine-learning-interpretability - DIANNA | "DIANNA is a Python package that brings explainable AI (XAI) to your research project. It wraps carefully selected XAI methods in a simple, uniform interface. It's built by, with and for (academic) researchers and research software engineers working on machine learning projects." | (Technical Resources / Open Source/Access Responsible AI Software Packages)

README


# Deep Insight And Neural Network Analysis

DIANNA is a Python package that brings explainable AI (XAI) to your research project. It wraps carefully selected XAI methods in a simple, uniform interface.

It's built by, with and for (academic) researchers and research software engineers working on machine learning projects.

## Why DIANNA?

DIANNA addresses the needs of both (X)AI researchers and, above all, scientists in various domains who use (or will use) AI models in their research without being (X)AI experts. DIANNA is future-proof: it is one of the very few XAI libraries supporting the [Open Neural Network Exchange (ONNX)](https://onnx.ai/) format.

After studying the vast XAI landscape, we chose which parts of the [XAI Taxonomy](https://doi.org/10.3390/make3030032) to focus on: which methods, data modalities and problem types. Our choices, based on the most frequent usage in the scientific literature, are shown graphically in the XAI taxonomy below:

The key points of DIANNA:

* Provides an easy-to-use interface for non-(X)AI experts

* Implements the well-known XAI methods LIME, RISE and KernelSHAP, chosen using systematic and objective evaluation criteria

* Supports ONNX, the de facto standard format for neural network models

* Supports image, text, time series, and tabular data modalities; support for embeddings is under development

* Comes with simple, intuitive image, text, time series, and tabular benchmarks that can help you with your XAI research

* Includes tutorials on scientific use-cases

* Easily extendable to other XAI methods

For more information on the unique strengths of DIANNA compared to other tools, please see the [context landscape](https://dianna.readthedocs.io/en/latest/CONTEXT.html).

## Installation

[](https://pypi.python.org/project/dianna/)

[](https://pypi.python.org/project/dianna/)

DIANNA can be installed from PyPI using [pip](https://pip.pypa.io/en/stable/installation/) on any of the supported Python versions (see badge):

```console
python3 -m pip install dianna
```

To install the most recent development version directly from the GitHub repository run:

```console
python3 -m pip install git+https://github.com/dianna-ai/dianna.git
```
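To confirm which version ended up in your environment after either installation route, you can query the installed package metadata. A minimal sketch using only the standard library's `importlib.metadata`; `installed_version` is a hypothetical helper, not part of DIANNA.

```python
# Illustrative sketch: installed_version is a hypothetical helper, not part of DIANNA.
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(distribution: str) -> Optional[str]:
    """Return the installed version of a distribution, or None if it is missing."""
    try:
        return version(distribution)
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_version("dianna")
    if found is None:
        print("dianna is not installed in this environment")
    else:
        print(f"dianna {found} is installed")
```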

If you get an error related to OpenMP when importing dianna, have a look at [this issue](https://github.com/dianna-ai/dianna/issues/376) for possible workarounds.

### Prerequisites (MacBook Pro with M1 Pro chip users only)

- To install TensorFlow you can follow this [tutorial](https://betterdatascience.com/install-tensorflow-2-7-on-macbook-pro-m1-pro/).

- To install TensorFlow Addons you can follow these [steps](https://github.com/tensorflow/addons/pull/2504). For further reading, see this [issue](https://github.com/tensorflow/addons/issues/2503). This temporary solution works only on macOS 12.0 or later, and the step may have changed since; see https://github.com/dianna-ai/dianna/issues/245.

- Before installing DIANNA, comment out the `tensorflow` requirement in the `setup.cfg` file (the TensorFlow package for M1 is called `tensorflow-macos`).
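The `setup.cfg` edit from the last step can also be scripted. A minimal sketch, assuming each requirement sits on its own (possibly indented) line under `install_requires`; `comment_out_requirement` is a hypothetical helper, not part of DIANNA. It only matches the exact package name, so similarly named packages such as `tensorflow-macos` are left untouched.

```python
# Illustrative sketch: comment_out_requirement is a hypothetical helper, not part of DIANNA.
import re


def comment_out_requirement(cfg_text: str, package: str) -> str:
    """Prefix '# ' to requirement lines that name `package` exactly.

    A line matches when, after its indentation, it starts with the package name
    followed by whitespace, a version specifier, an extras bracket, an
    environment marker, or the end of the line.
    """
    pattern = re.compile(rf"^(\s*)({re.escape(package)})(?=[\s<>=!~\[;]|$)")
    out = []
    for line in cfg_text.splitlines():
        match = pattern.match(line)
        if match:
            indent = match.group(1)
            line = f"{indent}# {line[len(indent):]}"  # keep indentation, comment the rest
        out.append(line)
    return "\n".join(out)
```

For example, `Path("setup.cfg").write_text(comment_out_requirement(Path("setup.cfg").read_text(), "tensorflow"))` (with `from pathlib import Path`) would rewrite the file in place.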

## Getting started

You need:

- your trained ONNX model ([convert my pytorch/tensorflow/keras/scikit-learn model to ONNX](https://github.com/dianna-ai/dianna#onnx-models))

- 1 data item to be explained

You get:

- a relevance map overlaid on the data item
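Conceptually, such an overlay blends the data item with a normalized version of the relevance map. A minimal sketch for a greyscale image stored as nested lists, assuming min-max normalization and alpha blending; DIANNA's own visualization functions may scale and color the map differently, and `normalize` and `overlay` are hypothetical names.

```python
# Illustrative sketch: normalize/overlay are hypothetical helpers, not DIANNA's API.
from typing import List

Grid = List[List[float]]


def normalize(relevance: Grid) -> Grid:
    """Min-max scale a 2-D relevance map to [0, 1]."""
    flat = [value for row in relevance for value in row]
    low, high = min(flat), max(flat)
    span = high - low
    if span == 0:  # constant map: nothing stands out
        return [[0.0 for _ in row] for row in relevance]
    return [[(value - low) / span for value in row] for row in relevance]


def overlay(image: Grid, relevance: Grid, alpha: float = 0.5) -> Grid:
    """Blend a greyscale image in [0, 1] with its normalized relevance map."""
    heat = normalize(relevance)
    return [
        [(1 - alpha) * pixel + alpha * weight for pixel, weight in zip(img_row, heat_row)]
        for img_row, heat_row in zip(image, heat)
    ]
```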

The general usage is explained in [How to use DIANNA](https://dianna.readthedocs.io/en/latest/usage.html) in the library's documentation.

### Demo movie

[Watch the DIANNA demo on YouTube](https://youtu.be/u9_c5DJewLU)

### Text example:

```python
import dianna

model_path = 'your_model.onnx'  # model trained on text
text = 'The movie started great but the ending is boring and unoriginal.'
```

Which of your model's classes do you want an explanation for?

```python
labels = [positive_class, negative_class]  # placeholders: your model's classes
```

Run using the XAI method of your choice, for example LIME:

```python
explanation = dianna.explain_text(model_path, text, 'LIME')
dianna.visualization.highlight_text(explanation[labels.index(positive_class)], text)
```
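LIME explains a single prediction by perturbing the input, scoring each perturbed copy with the model, and fitting a locally weighted linear surrogate whose coefficients act as word relevances. The stdlib-only sketch below illustrates that idea under simplifying assumptions (whitespace tokenization, random keep/drop word masks, an exponential kernel on cosine distance with an assumed width, and a tiny ridge term for numerical stability). It is not DIANNA's implementation; `lime_text` and the toy scorer are hypothetical.

```python
# Illustrative LIME-style sketch: lime_text is hypothetical, not DIANNA's API.
import math
import random
from typing import Callable, List


def _solve(matrix: List[List[float]], rhs: List[float]) -> List[float]:
    """Solve a small dense linear system by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    solution = [0.0] * n
    for r in range(n - 1, -1, -1):
        acc = sum(aug[r][c] * solution[c] for c in range(r + 1, n))
        solution[r] = (aug[r][n] - acc) / aug[r][r]
    return solution


def lime_text(predict: Callable[[str], float], text: str, num_samples: int = 500,
              kernel_width: float = 0.25, ridge: float = 1e-3, seed: int = 0) -> List[float]:
    """Return one relevance score per whitespace-separated word."""
    rng = random.Random(seed)
    words = text.split()
    d = len(words)
    rows, targets, weights = [], [], []
    for i in range(num_samples):
        # the first sample is the unperturbed text; the others drop random words
        mask = [1] * d if i == 0 else [rng.randint(0, 1) for _ in range(d)]
        kept = " ".join(word for word, keep in zip(words, mask) if keep)
        # cosine similarity between the mask and the all-ones vector is sqrt(kept / d)
        distance = 1.0 - math.sqrt(sum(mask) / d)
        weights.append(math.exp(-(distance ** 2) / kernel_width ** 2))
        rows.append([1.0] + [float(keep) for keep in mask])  # intercept + word indicators
        targets.append(predict(kept))
    # weighted ridge regression: (X^T W X + ridge * I) beta = X^T W y
    p = d + 1
    xtwx = [[sum(w * row[a] * row[b] for row, w in zip(rows, weights)) + (ridge if a == b else 0.0)
             for b in range(p)] for a in range(p)]
    xtwy = [sum(w * row[a] * y for row, w, y in zip(rows, weights, targets)) for a in range(p)]
    beta = _solve(xtwx, xtwy)
    return beta[1:]  # drop the intercept, keep one coefficient per word
```

For instance, with a toy scorer that adds 0.4 when "great" is present and subtracts 0.3 when "boring" is present, the surrogate gives "great" the largest positive score and "boring" the most negative one.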

### Image example:

```python
import PIL.Image
import dianna

model_path = 'your_model.onnx'  # model trained on images
image = PIL.Image.open('your_image.jpeg')
```

Tell DIANNA which axis of the image holds the channels (colors):

```python
axis_labels = {0: 'channels'}  # axis 0 of the image holds the color channels
```
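The `axis_labels` mapping has to match the actual layout of your image data: `{0: 'channels'}` declares that the first axis holds the channels (channels-first). Images loaded with PIL and converted to arrays are typically channels-last (height × width × channels), so either adjust the mapping or reorder the data. A stdlib sketch of that reordering for an image stored as nested lists; `hwc_to_chw` is a hypothetical helper (with NumPy, `np.transpose(a, (2, 0, 1))` does the same).

```python
# Illustrative sketch: hwc_to_chw is a hypothetical helper, not part of DIANNA.
from typing import List

Image3D = List[List[List[float]]]


def hwc_to_chw(image: Image3D) -> Image3D:
    """Reorder a height x width x channels nested list to channels x height x width."""
    height, width, channels = len(image), len(image[0]), len(image[0][0])
    return [[[image[y][x][c] for x in range(width)] for y in range(height)]
            for c in range(channels)]
```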

Which of your model's classes do you want an explanation for?

```python
labels = [class_a, class_b]  # placeholders: the classes to explain
```

Run using the XAI method of your choice, for example RISE:

```python
explanation = dianna.explain_image(model_path, image, 'RISE', axis_labels=axis_labels, labels=labels)
dianna.visualization.plot_image(explanation[labels.index(class_a)], original_data=image)
```
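RISE estimates pixel importance by scoring many randomly masked copies of the image and averaging the masks, each weighted by the model's score and normalized by the expected number of times a pixel is kept. A minimal Monte Carlo sketch on a greyscale image stored as nested lists; unlike the original method it draws masks per pixel instead of upsampling smooth low-resolution masks, and `rise_saliency` and the toy model are hypothetical, not DIANNA's API.

```python
# Illustrative RISE-style sketch: rise_saliency is hypothetical, not DIANNA's API.
import random
from typing import Callable, List

Grid = List[List[float]]


def rise_saliency(predict: Callable[[Grid], float], image: Grid, num_masks: int = 2000,
                  keep_prob: float = 0.5, seed: int = 0) -> Grid:
    """Monte Carlo estimate: average of random masks weighted by the model score."""
    rng = random.Random(seed)
    height, width = len(image), len(image[0])
    saliency = [[0.0] * width for _ in range(height)]
    for _ in range(num_masks):
        mask = [[1.0 if rng.random() < keep_prob else 0.0 for _ in range(width)]
                for _ in range(height)]
        masked = [[p * m for p, m in zip(img_row, mask_row)]
                  for img_row, mask_row in zip(image, mask)]
        score = predict(masked)
        for y in range(height):
            for x in range(width):
                saliency[y][x] += score * mask[y][x]
    norm = num_masks * keep_prob  # expected number of times each pixel is kept
    return [[value / norm for value in row] for row in saliency]
```

With a toy model that only looks at the center pixel of a 3 × 3 image of ones, the center gets a saliency close to 1 and every other pixel a value close to `keep_prob`.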

### Time-series example:

```python
import numpy as np
import pandas as pd
import dianna

model_path = 'your_model.onnx'  # model trained on time series
timeseries_instance = pd.read_csv('your_data_instance.csv').astype(float)
num_features = len(timeseries_instance)  # the number of features to include in the explanation
num_samples = 500  # the number of samples to generate for the LIME explainer
```

Which of your model's classes do you want an explanation for?

```python
class_names = [class_a, class_b]  # string representation of the classes of interest
labels = np.arange(len(class_names))  # numerical labels of those classes, in the same order as class_names
```

Run using the XAI method of your choice, for example LIME with the following additional arguments:

```python
explanation = dianna.explain_timeseries(model_path, timeseries_data=timeseries_instance, method='LIME',
                                        labels=labels, class_names=class_names, num_features=num_features,
                                        num_samples=num_samples, distance_method='cosine')
```
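In a LIME-style explainer for time series, each perturbed copy of the series is weighted by how close it is to the original, which is what a setting like `distance_method='cosine'` controls. The sketch below shows one common way to generate such perturbations and weights, assuming each feature is a contiguous segment of the series (with `num_features = len(timeseries_instance)` every time step is its own segment), masked segments are replaced by the series mean, and the kernel width is an assumed value. DIANNA's own perturbation and kernel choices may differ; all names here are hypothetical.

```python
# Illustrative sketch of LIME-style perturbation for time series (hypothetical helpers).
import math
import random
from typing import List, Sequence, Tuple


def segment_bounds(length: int, num_segments: int) -> List[Tuple[int, int]]:
    """Split range(length) into num_segments contiguous, nearly equal slices."""
    edges = [round(i * length / num_segments) for i in range(num_segments + 1)]
    return list(zip(edges[:-1], edges[1:]))


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """1 - cosine similarity; defined as 1.0 when either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm if norm else 1.0


def perturb(series: Sequence[float], num_segments: int, num_samples: int,
            kernel_width: float = 0.25, seed: int = 0) -> List[Tuple[List[int], List[float], float]]:
    """Return (mask, perturbed_series, weight) triples for fitting a surrogate model."""
    rng = random.Random(seed)
    bounds = segment_bounds(len(series), num_segments)
    mean = sum(series) / len(series)
    samples = []
    for _ in range(num_samples):
        mask = [rng.randint(0, 1) for _ in bounds]
        perturbed = list(series)
        for keep, (start, stop) in zip(mask, bounds):
            if not keep:
                perturbed[start:stop] = [mean] * (stop - start)  # replace segment by the mean
        # exponential kernel: closer copies get weights nearer to 1
        weight = math.exp(-cosine_distance(series, perturbed) ** 2 / kernel_width ** 2)
        samples.append((mask, perturbed, weight))
    return samples
```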

For visualization of the heatmap, please refer to the [tutorial](https://github.com/dianna-ai/dianna/blob/main/tutorials/explainers/LIME/lime_timeseries_coffee.ipynb).

### Tabular example:

```python
import pandas as pd
import dianna

model_path = 'your_model.onnx'  # model trained on tabular data
tabular_instance = pd.read_csv('your_data_instance.csv')
```

Run using the XAI method of your choice. Note that you need to specify the mode, either regression or classification. In this example, KernelSHAP explains a regression task using the following additional arguments:

```python
# X_train: your training data as a pandas DataFrame (assumed to be loaded already)
explanation = dianna.explain_tabular(model_path, input_tabular=tabular_instance, method='kernelshap',
                                     mode='regression', training_data=X_train,
                                     training_data_kmeans=5, feature_names=X_train.columns)
dianna.visualization.plot_tabular(explanation, X_train.columns, num_features=10)  # display the 10 most salient features
```
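KernelSHAP approximates Shapley values: each feature's average marginal contribution over all coalitions of the other features, with "absent" features filled in from background data (here presumably `training_data`, summarized into `training_data_kmeans` clusters). For a handful of features the values can be computed exactly, which is handy for sanity-checking an explanation. A minimal sketch, assuming a single baseline point instead of a background set; `shapley_values` is a hypothetical helper, not DIANNA's API.

```python
# Illustrative sketch: exact Shapley values with a single baseline (hypothetical helper).
from itertools import combinations
from math import factorial
from typing import Callable, List, Sequence, Set


def shapley_values(predict: Callable[[List[float]], float], x: Sequence[float],
                   baseline: Sequence[float]) -> List[float]:
    """Exact Shapley values; features outside a coalition take their baseline value."""
    n = len(x)

    def value(coalition: Set[int]) -> float:
        point = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(point)

    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions S of this size
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi += weight * (value(s | {i}) - value(s))
        phis.append(phi)
    return phis
```

The values always add up to `predict(x) - predict(baseline)`, and for a linear model each one reduces to coefficient × (feature value − baseline value), which makes a quick consistency check against an approximate explanation.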

### IMPORTANT: Sensitivity to hyperparameters

The XAI methods (explainers) are sensitive to the choice of their hyperparameters! This sensitivity is investigated in this [work](https://staff.fnwi.uva.nl/a.s.z.belloum/MSctheses/MScthesis_Willem_van_der_Spec.pdf), which draws useful conclusions.

The default hyperparameters used in DIANNA for each explainer as well as the values for our tutorial examples are given in the Tutorials [README](./tutorials/README.md#important-hyperparameters).
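A quick way to gauge this sensitivity on your own model is to run the same explainer twice with different settings (for example two values of `num_samples`, or two random seeds) and measure how well the top-ranked features agree. A minimal, explainer-agnostic sketch; `top_k_overlap` is a hypothetical helper, and ranking features by absolute relevance is an assumption.

```python
# Illustrative sketch: top_k_overlap is a hypothetical helper, not part of DIANNA.
from typing import Sequence, Set


def top_k_overlap(scores_a: Sequence[float], scores_b: Sequence[float], k: int) -> float:
    """Fraction of the k most relevant features (by |score|) shared by two explanations."""
    def top(scores: Sequence[float]) -> Set[int]:
        ranked = sorted(range(len(scores)), key=lambda i: abs(scores[i]), reverse=True)
        return set(ranked[:k])

    return len(top(scores_a) & top(scores_b)) / k
```

A value close to 1 means the two settings highlight the same features; a low value suggests the explanation depends strongly on the chosen hyperparameters.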

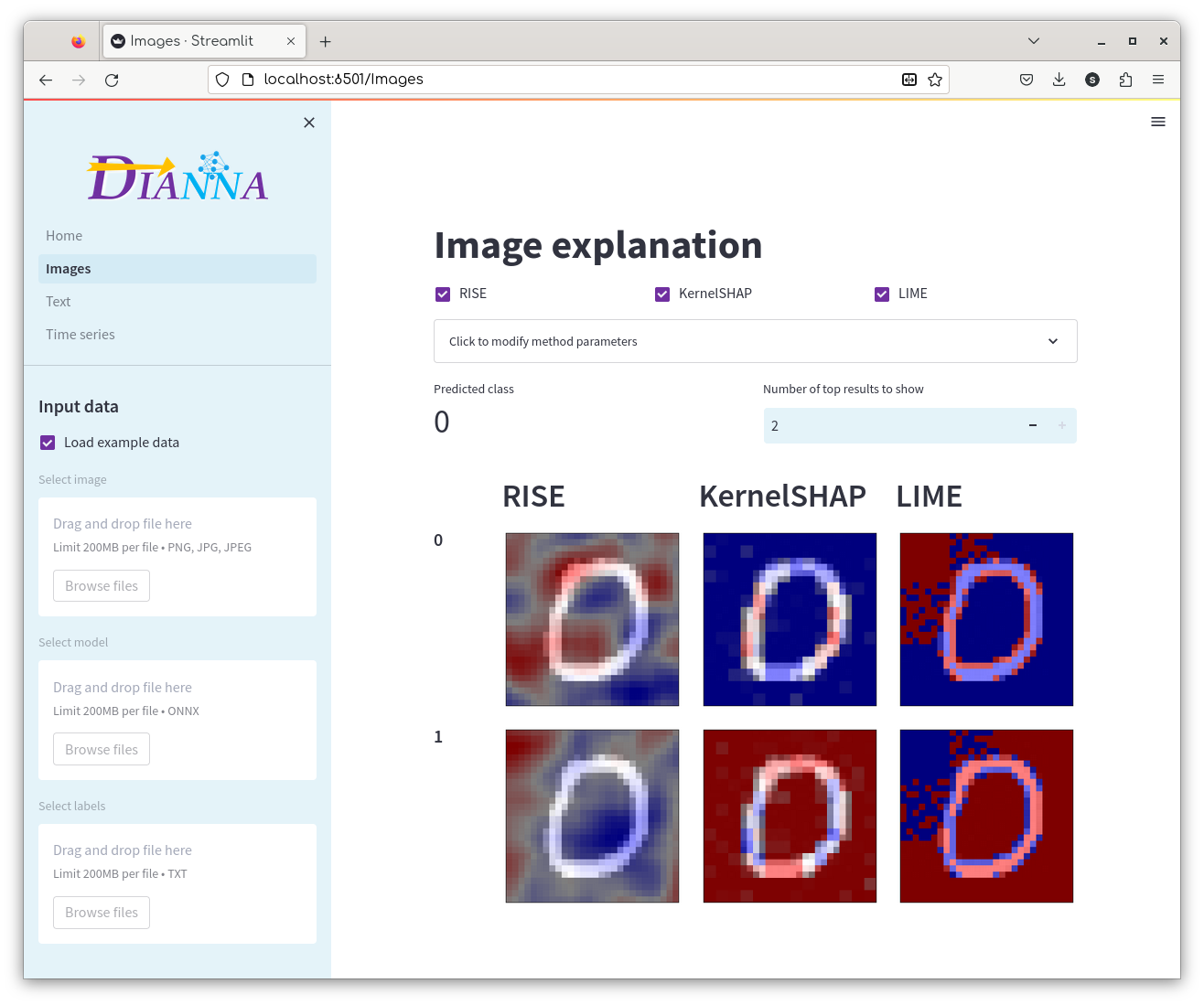

## Dashboard

Explore the explanations of your trained model using the DIANNA dashboard (currently, image, text and time series classification are supported).

[Click here](https://github.com/dianna-ai/dianna/tree/main/dianna/dashboard) for more information.

## Datasets

DIANNA comes with simple datasets. Their main goal is to provide intuitive insight into the working of the XAI methods. They can be used as benchmarks for evaluation and comparison of existing and new XAI methods.

### Images

| Dataset | Description | Examples | Generation |
| :--- | :--- | :--- | :--- |
| Binary MNIST | Greyscale images of the digits "1" and "0" - a 2-class subset of the famous [MNIST dataset](http://yann.lecun.com/exdb/mnist/) for handwritten digit classification. | | [Binary MNIST dataset generation](https://github.com/dianna-ai/dianna-exploration/tree/main/example_data/dataset_preparation/MNIST) |
| [Simple Geometric (circles and triangles)](https://doi.org/10.5281/zenodo.5012824) | Images of circles and triangles for 2-class geometric shape classification. The shapes vary in size and orientation, and the backgrounds have varying uniform gray levels. | | [Simple geometric shapes dataset generation](https://github.com/dianna-ai/dianna-exploration/tree/main/example_data/dataset_preparation/geometric_shapes) |
| [Simple Scientific (LeafSnap30)](https://zenodo.org/record/5061353/) | Color images of tree leaves - a 30-class post-processed subset of the LeafSnap dataset for automatic identification of North American tree species. | | [LeafSnap30 dataset generation](https://github.com/dianna-ai/dianna-exploration/blob/main/example_data/dataset_preparation/LeafSnap/) |

### Text

| Dataset | Description | Examples | Generation |
| :--- | :--- | :--- | :--- |
| [Stanford sentiment treebank](https://nlp.stanford.edu/sentiment/index.html) | Dataset for predicting the sentiment, positive or negative, of movie reviews. | _This movie was actually neither that funny, nor super witty._ | [Sentiment treebank](https://nlp.stanford.edu/sentiment/treebank.html) |

### Time series

| Dataset | Description | Examples | Generation |
| :--- | :--- | :--- | :--- |
| Coffee dataset | Food spectrograph time series dataset for a two-class problem: distinguishing between Robusta and Arabica coffee beans. | | [data source](https://github.com/QIBChemometrics/Benchtop-NMR-Coffee-Survey) |
| [Weather dataset](https://zenodo.org/record/7525955) | The light version of the weather prediction dataset, which contains daily observations (89 features) for 11 European locations from 2000 to 2010. | | [data source](https://github.com/florian-huber/weather_prediction_dataset) |

### Tabular

| Dataset | Description | Examples | Generation |
| :--- | :--- | :--- | :--- |
| [Penguin dataset](https://www.kaggle.com/code/parulpandey/penguin-dataset-the-new-iris) | The Palmer Archipelago (Antarctica) penguin dataset is a great introductory dataset for data exploration and visualization, similar to the famous Iris dataset. | | [data source](https://github.com/allisonhorst/palmerpenguins) |
| [Weather dataset](https://zenodo.org/record/7525955) | The light version of the weather prediction dataset, which contains daily observations (89 features) for 11 European locations from 2000 to 2010. | | [data source](https://github.com/florian-huber/weather_prediction_dataset) |

## ONNX models

**We work with ONNX!** ONNX is a great unified neural network standard that can be used to boost reproducible science. Using ONNX for your model can also improve its performance! If your models are still in another popular DNN (deep neural network) format, here are some simple recipes to convert them:

* [pytorch and pytorch-lightning](https://github.com/dianna-ai/dianna/blob/main/tutorials/conversion_onnx/pytorch2onnx.ipynb) - use the built-in [`torch.onnx.export`](https://pytorch.org/docs/stable/onnx.html) function to convert pytorch models to onnx, or call the built-in [`to_onnx`](https://pytorch-lightning.readthedocs.io/en/latest/deploy/production_advanced.html) function on your [`LightningModule`](https://lightning.ai/docs/pytorch/latest/api/lightning.pytorch.core.LightningModule.html#lightning.pytorch.core.LightningModule) to export pytorch-lightning models to onnx.

* [tensorflow](https://github.com/dianna-ai/dianna/blob/main/tutorials/conversion_onnx/tensorflow2onnx.ipynb) - use the [`tf2onnx`](https://github.com/onnx/tensorflow-onnx) package to convert tensorflow models to onnx.

* [keras](https://github.com/dianna-ai/dianna/blob/main/tutorials/conversion_onnx/keras2onnx.ipynb) - same as the conversion from tensorflow to onnx, the [`tf2onnx`](https://github.com/onnx/tensorflow-onnx) package also supports keras.

* [scikit-learn](https://github.com/dianna-ai/dianna/blob/main/tutorials/conversion_onnx/skl2onnx.ipynb) - use the [`skl2onnx`](https://github.com/onnx/sklearn-onnx) package to convert scikit-learn models to onnx.

More converters with examples and tutorials can be found on the [ONNX tutorial page](https://github.com/onnx/tutorials).

And here are links to notebooks showing how we created our models on the benchmark datasets:

### Images

| Models | Generation |
| :--- | :--- |
| [Binary MNIST model](https://zenodo.org/record/5907177) | [Binary MNIST model generation](https://github.com/dianna-ai/dianna-exploration/blob/main/example_data/model_generation/MNIST/generate_model_binary.ipynb) |
| [Simple Geometric model](https://zenodo.org/deposit/5907059) | [Simple geometric shapes model generation](https://github.com/dianna-ai/dianna-exploration/blob/main/example_data/model_generation/geometric_shapes/generate_model.ipynb) |
| [Simple Scientific model](https://zenodo.org/record/5907196) | [LeafSnap30 model generation](https://github.com/dianna-ai/dianna-exploration/blob/main/example_data/model_generation/LeafSnap/generate_model.ipynb) |

### Text

| Models | Generation |
| :--- | :--- |
| [Movie reviews model](https://zenodo.org/record/5910598) | [Stanford sentiment treebank model generation](https://github.com/dianna-ai/dianna-exploration/blob/main/example_data/model_generation/movie_reviews/generate_model.ipynb) |

### Time series

| Models | Generation |
| :--- | :--- |
| [Coffee model](https://zenodo.org/records/10579458) | [Coffee model generation](https://github.com/dianna-ai/dianna-exploration/blob/main/example_data/model_generation/coffee/generate_model.ipynb) |
| [Season prediction model](https://zenodo.org/record/7543883) | [Season prediction model generation](https://github.com/dianna-ai/dianna-exploration/blob/main/example_data/model_generation/season_prediction/generate_model.ipynb) |
| [Fast Radio Burst classification model](https://zenodo.org/records/10656614) | [Fast Radio Burst classification model generation](https://doi.org/10.3847/1538-3881/aae649) |

### Tabular

| Models | Generation |
| :--- | :--- |
| [Penguin model (classification)](https://zenodo.org/records/10580743) | [Penguin model generation](https://github.com/dianna-ai/dianna-exploration/blob/main/example_data/model_generation/penguin_species/generate_model.ipynb) |
| [Sunshine hours prediction model (regression)](https://zenodo.org/records/10580833) | [Sunshine hours prediction model generation](https://github.com/dianna-ai/dianna-exploration/blob/main/example_data/model_generation/sunshine_prediction/generate_model.ipynb) |

**_We envision the birth of the ONNX Scientific models zoo soon..._**

## Tutorials

DIANNA supports different data modalities and XAI methods (explainers). We have evaluated many explainers using objective criteria (see the [How to find your AI explainer](https://blog.esciencecenter.nl/how-to-find-your-artificial-intelligence-explainer-dbb1ac608009) blog post). The table below links to the papers describing the supported XAI methods (for some explanatory videos on the methods, please see the [tutorials](./tutorials)). The DIANNA [tutorials](./tutorials) cover each supported method and data modality on at least one dataset, using the default or tuned [hyperparameters](./tutorials/README.md#important-hyperparameters). Our plans to expand DIANNA with more data modalities and explainers are given in the [ROADMAP](https://dianna.readthedocs.io/en/latest/ROADMAP.html).

| Data \ XAI | [RISE](http://bmvc2018.org/contents/papers/1064.pdf) | [LIME](https://www.kdd.org/kdd2016/papers/files/rfp0573-ribeiroA.pdf) | [KernelSHAP](https://proceedings.neurips.cc/paper/2017/file/8a20a8621978632d76c43dfd28b67767-Paper.pdf) |
| :--- | :--- | :--- | :--- |
| Images | ✅ | ✅ | ✅ |
| Text | ✅ | ✅ | |
| Timeseries | ✅ | ✅ | |
| Tabular | planned | ✅ | ✅ |
| Embedding | work in progress | | |
| Graphs* | next steps | ... | ... |

[LRP](https://journals.plos.org/plosone/article/file?id=10.1371/journal.pone.0130140&type=printable) and [PatternAttribution](https://arxiv.org/pdf/1705.05598.pdf) also feature in the top 5 of our thoroughly evaluated explainers.

[GradCAM](https://openaccess.thecvf.com/content_ICCV_2017/papers/Selvaraju_Grad-CAM_Visual_Explanations_ICCV_2017_paper.pdf) has also recently been found to be *semantically continuous*! **Contributions adding these and other (new) post-hoc explainability methods for ONNX models are very welcome!**

### Scientific use-cases

Our goal is for the scientific community to embrace XAI as a source of novel and unexplored perspectives on scientific problems.

Here, we offer [tutorials](./tutorials) on specific scientific use-cases of XAI:

| Use-case (data) \ XAI | [RISE](http://bmvc2018.org/contents/papers/1064.pdf) | [LIME](https://www.kdd.org/kdd2016/papers/files/rfp0573-ribeiroA.pdf) | [KernelSHAP](https://proceedings.neurips.cc/paper/2017/file/8a20a8621978632d76c43dfd28b67767-Paper.pdf) |
| :--- | :--- | :--- | :--- |
| Biology (Phytomorphology): Tree Leaves classification (images) | | ✅ | |
| Astronomy: Fast Radio Burst detection (timeseries) | ✅ | | |
| Geo-science (raster data) | planned | ... | ... |
| Social sciences (text) | work in progress | ... | ... |
| Climate | planned | ... | ... |

## Reference documentation

For detailed information on using specific DIANNA functions, please visit the [documentation page hosted at Readthedocs](https://dianna.readthedocs.io/en/latest).

## Contributing

If you want to contribute to the development of DIANNA, have a look at the [contribution guidelines](https://dianna.readthedocs.io/en/latest/CONTRIBUTING.html).

See our [developer documentation](docs/developer_info.rst) for information on developer installation, running tests, generating documentation, versioning and making a release.

## How to cite us

[](https://zenodo.org/record/5592606)

[](https://www.research-software.nl/software/dianna)

If you use this package for your scientific work, please consider citing directly the software as:

Ranguelova, E., Bos, P., Liu, Y., Meijer, C., Oostrum, L., Crocioni, G., Ootes, L., Chandramouli, P., Jansen, A., Smeets, S. (2023). dianna (*[VERSION YOU USED]*). Zenodo. https://zenodo.org/record/5592606

or the JOSS paper as:

Ranguelova et al., (2022). DIANNA: Deep Insight And Neural Network Analysis. Journal of Open Source Software, 7(80), 4493, https://doi.org/10.21105/joss.04493

See also the [Zenodo page](https://zenodo.org/record/5592606) or the [JOSS page](https://joss.theoj.org/papers/10.21105/joss.04493) for exporting the software citation to BibTeX and other formats.

## Credits

This package was created with [Cookiecutter](https://github.com/audreyr/cookiecutter) and the [NLeSC/python-template](https://github.com/NLeSC/python-template).