Ecosyste.ms: Awesome

An open API service indexing awesome lists of open source software.

https://github.com/TonyLianLong/LLM-groundedVideoDiffusion

LLM-grounded Video Diffusion Models (LVD): official implementation for the LVD paper

diffusion diffusion-models large-language-models llm text-to-image text-to-video text-to-video-generation video-generation

Last synced: 4 months ago

- Host: GitHub

- URL: https://github.com/TonyLianLong/LLM-groundedVideoDiffusion

- Owner: TonyLianLong

- Created: 2023-10-02T17:15:55.000Z (9 months ago)

- Default Branch: main

- Last Pushed: 2023-10-02T17:30:34.000Z (9 months ago)

- Last Synced: 2023-10-02T23:32:56.056Z (9 months ago)

- Topics: diffusion, diffusion-models, large-language-models, llm, text-to-image, text-to-video, text-to-video-generation, video-generation

- Homepage: https://llm-grounded-video-diffusion.github.io/

- Size: 1000 Bytes

- Stars: 3

- Watchers: 1

- Forks: 0

- Open Issues: 0

Metadata Files:

- Readme: README.md

Lists

- awesome-diffusion-categorized - [Code

README

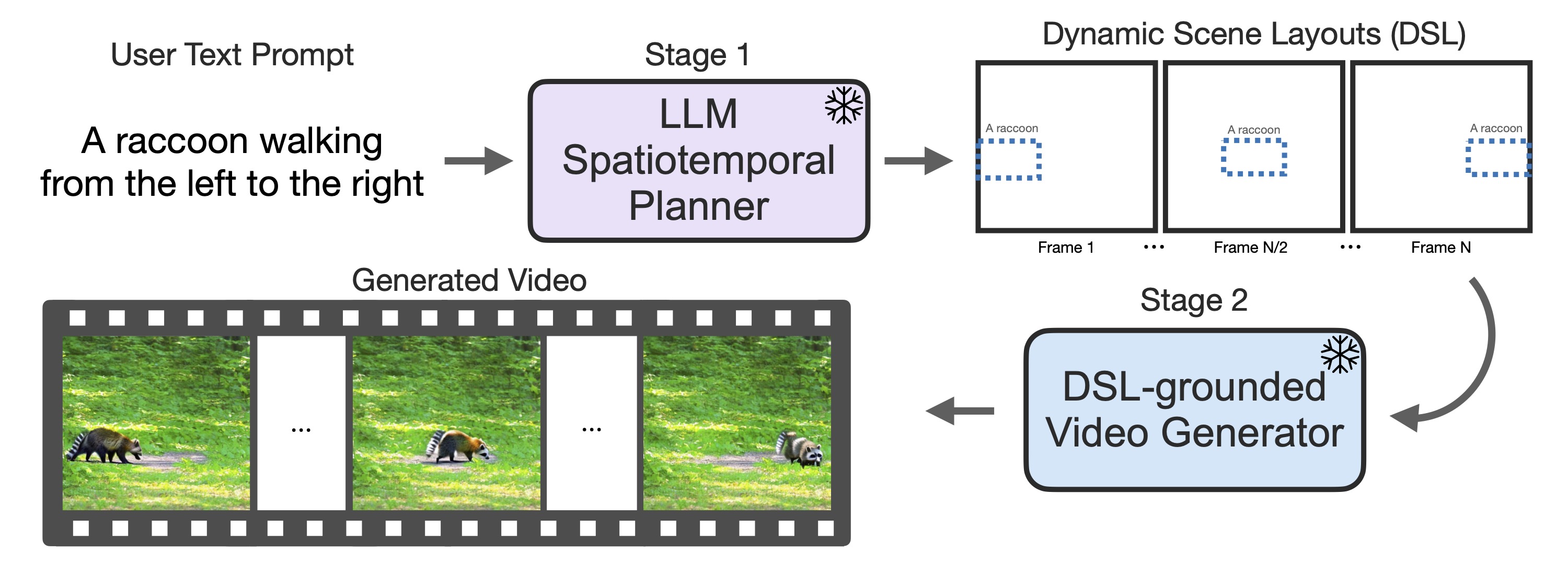

# LLM-grounded Video Diffusion Models

[Long Lian](https://tonylian.com/), [Baifeng Shi](https://bfshi.github.io/), [Adam Yala](https://www.adamyala.org/), [Trevor Darrell](https://people.eecs.berkeley.edu/~trevor/), [Boyi Li](https://sites.google.com/site/boyilics/home) at UC Berkeley/UCSF.

[Paper](https://arxiv.org/abs/2309.17444) | [Project Page](https://llm-grounded-video-diffusion.github.io/) | [HuggingFace Demo (coming soon)](#) | [Related Project: LMD](https://llm-grounded-diffusion.github.io/) | [Citation](#citation)

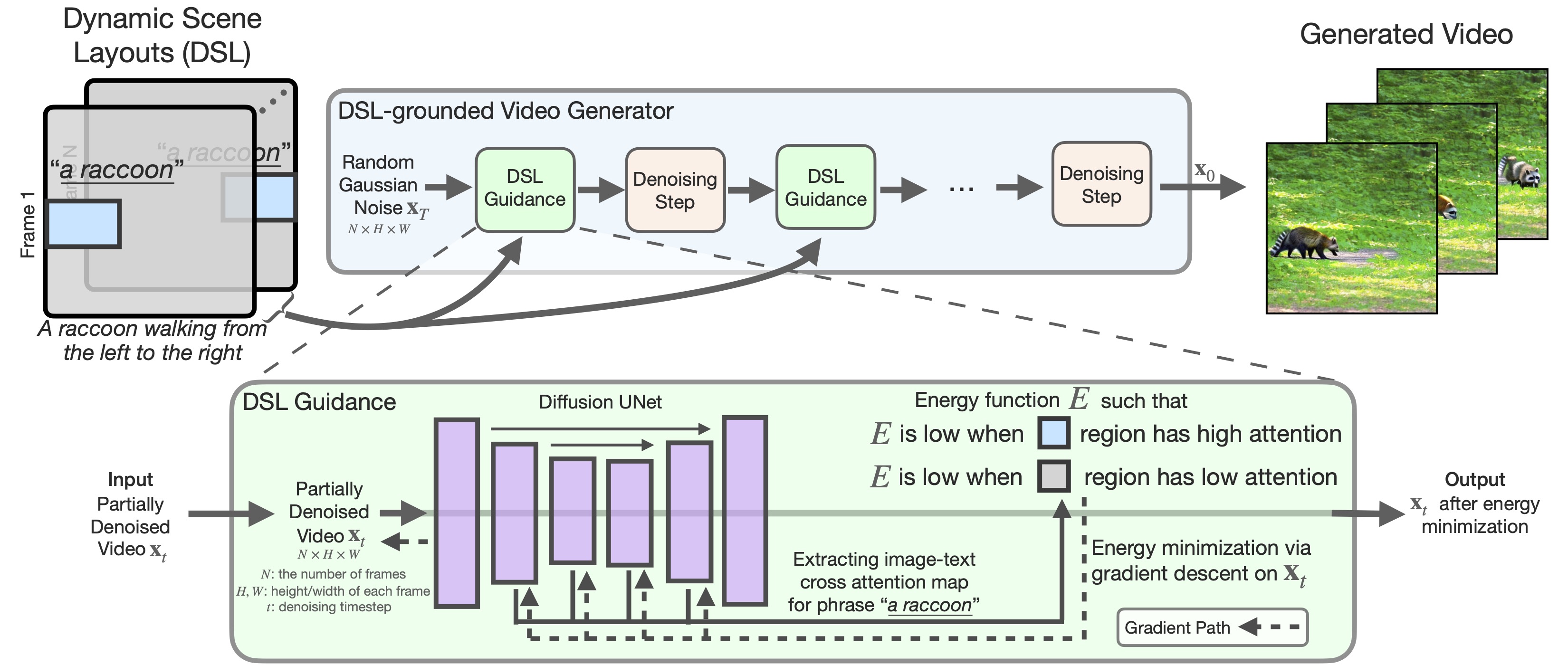

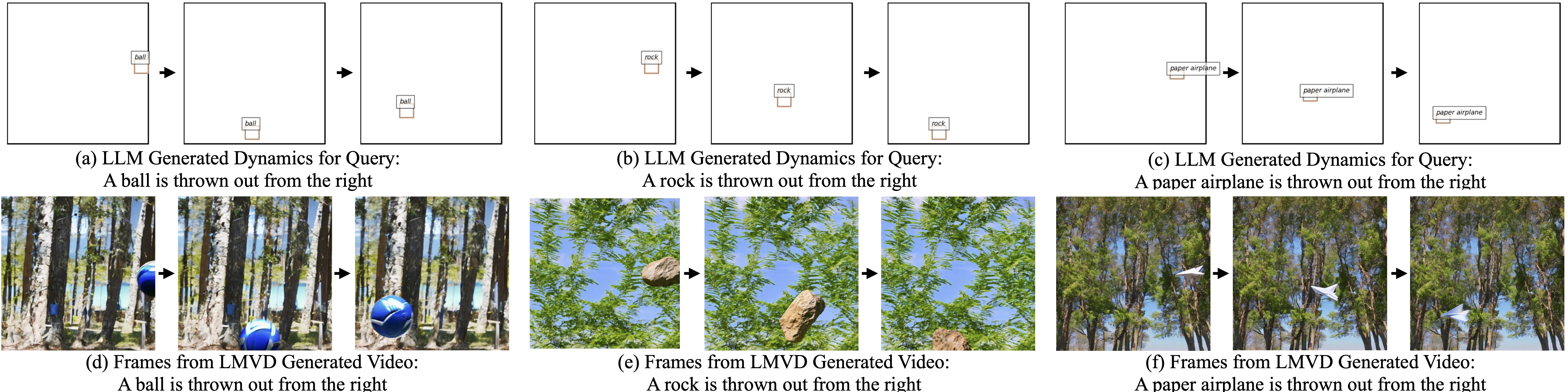

Our dynamic scene layout (DSL)-grounded video generator:

The LLM generates dynamic scene layouts that take world properties (e.g., gravity, elasticity, air friction) into account:
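To make the idea of a gravity-aware layout concrete, here is a minimal Python sketch of per-frame bounding boxes for a ball dropped from rest. It is an illustration only and not the authors' representation, since the official code is not yet released: the `Box` class, the `falling_ball_layout` function, the normalized coordinate convention, and every parameter value are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """Hypothetical axis-aligned box in normalized image coordinates.

    The origin is the top-left corner, so y grows downward.
    """
    x: float
    y: float
    w: float
    h: float


def falling_ball_layout(num_frames=6, fps=6.0, g=1.0,
                        start_y=0.1, size=0.1, ground_y=1.0):
    """Return one Box per frame for a ball dropped from rest.

    g is an assumed acceleration in image heights per second squared,
    chosen for illustration rather than taken from the paper.
    """
    boxes = []
    for i in range(num_frames):
        t = i / fps
        y = start_y + 0.5 * g * t * t   # free fall from rest
        y = min(y, ground_y - size)     # do not pass through the ground
        boxes.append(Box(x=0.45, y=y, w=size, h=size))
    return boxes


layout = falling_ball_layout()
for frame_index, box in enumerate(layout):
    print(frame_index, round(box.y, 3))
```

The vertical gap between consecutive boxes grows from frame to frame, which is the kind of physically plausible motion a layout that accounts for gravity would need to encode.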

The LLM generates dynamic scene layouts that take camera properties (e.g., perspective projection) into account:
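As a rough illustration of what perspective projection implies for a layout, the following Python sketch computes the apparent width of an object that approaches the camera at constant speed under a simple pinhole model (apparent size = focal length × physical width / depth). The function name `approaching_object_sizes` and all parameter values are hypothetical, and this is not taken from the authors' implementation.

```python
def approaching_object_sizes(num_frames=5, focal=1.0, width=0.2,
                             z_start=4.0, z_end=1.0):
    """Return the apparent box width per frame under a pinhole camera model.

    Depth z decreases linearly from z_start to z_end; all values are
    illustrative assumptions in arbitrary units.
    """
    sizes = []
    for i in range(num_frames):
        z = z_start + (z_end - z_start) * i / (num_frames - 1)
        sizes.append(focal * width / z)   # apparent size scales as 1 / depth
    return sizes


sizes = approaching_object_sizes()
for frame_index, s in enumerate(sizes):
    print(frame_index, round(s, 4))
```

Although the depth changes linearly, the box grows slowly at first and quickly near the camera, so a layout that respects perspective would not scale the box linearly over time.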

We propose a benchmark of five tasks. Our method improves on all five tasks without specifically aiming for each one:

## Code

The code is coming soon! Meanwhile, give this repo a star to support us!

## Contact us

Please contact Long (Tony) Lian if you have any questions: `[email protected]`.

## Citation

If you use our work or the implementation in this repo, or find them helpful, please consider citing us.

```
@article{lian2023llmgroundedvideo,
  title={LLM-grounded Video Diffusion Models},
  author={Lian, Long and Shi, Baifeng and Yala, Adam and Darrell, Trevor and Li, Boyi},
  journal={arXiv preprint arXiv:2309.17444},
  year={2023},
}

@article{lian2023llmgrounded,
  title={LLM-grounded Diffusion: Enhancing Prompt Understanding of Text-to-Image Diffusion Models with Large Language Models},
  author={Lian, Long and Li, Boyi and Yala, Adam and Darrell, Trevor},
  journal={arXiv preprint arXiv:2305.13655},
  year={2023}
}
```