https://github.com/eric-canas/qreader

Robust and Straight-Forward solution for reading difficult and tricky QR codes within images in Python. Powered by YOLOv8


computer-vision easy-to-use image-processing object-detection pip python pytorch pyzbar qr qrcode qrcode-reader qrcode-scanner yolov8


- Host: GitHub

- URL: https://github.com/eric-canas/qreader

- Owner: Eric-Canas

- License: mit

- Created: 2022-12-03T14:37:04.000Z (over 3 years ago)

- Default Branch: main

- Last Pushed: 2025-02-22T19:47:50.000Z (about 1 year ago)

- Last Synced: 2025-05-12T02:53:43.892Z (11 months ago)

- Topics: computer-vision, easy-to-use, image-processing, object-detection, pip, python, pytorch, pyzbar, qr, qrcode, qrcode-reader, qrcode-scanner, yolov8

- Language: Python

- Homepage:

- Size: 38.7 MB

- Stars: 268

- Watchers: 7

- Forks: 28

- Open Issues: 8


Metadata Files:

- Readme: README.md

- Funding: .github/FUNDING.yml

- License: LICENSE


# QReader

**QReader** is a **Robust** and **Straight-Forward** solution for reading **difficult** and **tricky** **QR** codes within images in **Python**. Powered by a YOLOv8 model.


Behind the scenes, the library is composed of two main building blocks: a YOLOv8 **QR Detector** model trained to **detect** and **segment** QR codes (also offered as stand-alone), and the Pyzbar **QR Decoder**. Using the information extracted by this **QR Detector**, **QReader** transparently applies, on top of Pyzbar, different image preprocessing techniques that maximize the **decoding** rate on difficult images.

## Installation

To install **QReader**, simply run:

```bash

pip install qreader

```

You may need to install some additional **pyzbar** dependencies:

On **Windows**:

In rare cases, you may see an `ImportError` related to `libzbar-64.dll`. If that happens, install [vcredist_x64.exe](https://www.microsoft.com/en-gb/download/details.aspx?id=40784) from the _Visual C++ Redistributable Packages for Visual Studio 2013_.

On **Linux**:

```bash

sudo apt-get install libzbar0

```

On **Mac OS X**:

```bash

brew install zbar

```

To install the **QReader** package locally in editable mode, run the following from the repository root:

```bash

python -m pip install --editable .

```

**NOTE:** If you're running **QReader** on a server with very limited resources, you may want to install the **CPU** version of [**PyTorch**](https://pytorch.org/get-started/locally/) before installing **QReader**. To do so, run: ``pip install torch --no-cache-dir`` (Thanks to [**@cjwalther**](https://github.com/Eric-Canas/QReader/issues/5) for his advice).

## Usage

**QReader** is a very simple and straight-forward library. For most use cases, you'll only need to call ``detect_and_decode``:

```python

from qreader import QReader

import cv2

# Create a QReader instance

qreader = QReader()

# Get the image that contains the QR code

image = cv2.cvtColor(cv2.imread("path/to/image.png"), cv2.COLOR_BGR2RGB)

# Use the detect_and_decode function to get the decoded QR data

decoded_text = qreader.detect_and_decode(image=image)

```

``detect_and_decode`` will return a `tuple` containing the decoded _string_ of every **QR** found in the image.

> **NOTE**: Some entries can be `None`. This happens when a **QR** code has been detected but **couldn't be decoded**.
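Since undecoded detections show up as `None`, callers will often want to separate them from the readable payloads. The following is a minimal sketch that assumes only the return shape described above; `split_decoded` is a hypothetical helper name and is not part of the library.

```python
def split_decoded(decoded):
    """Split a detect_and_decode() result into readable texts and a failure count."""
    # Keep only the entries that were actually decoded
    texts = [text for text in decoded if text is not None]
    # Every remaining entry was detected but could not be decoded
    undecoded = len(decoded) - len(texts)
    return texts, undecoded


# Hand-written tuple shaped like the documented return value
texts, undecoded = split_decoded(("https://example.com", None, "hello"))
print(f"{len(texts)} decoded, {undecoded} detected but unreadable")
```

A non-zero failure count can be a hint to retry with a higher resolution image.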

## API Reference

### QReader(model_size = 's', min_confidence = 0.5, reencode_to = 'shift-jis', weights_folder = None)

This is the main class of the library. Please, try to instantiate it just once to avoid loading the model every time you need to detect a **QR** code.
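One way to follow the "instantiate it just once" advice is to hide the constructor behind a cached accessor. This is only a sketch: `get_reader`, `_build_qreader` and the `factory` argument are hypothetical names introduced here (the `factory` hook exists so a stub can be substituted in tests), and the default constructor arguments are assumed to be acceptable.

```python
from functools import lru_cache


def _build_qreader():
    # Deferred import: the detection model is only loaded on first use
    from qreader import QReader
    return QReader()


@lru_cache(maxsize=1)
def get_reader(factory=_build_qreader):
    """Return one shared reader instance; repeated calls reuse the cached object."""
    return factory()
```

Application code would then call `get_reader().detect_and_decode(image=image)` wherever a reader is needed, so the model is loaded at most once per process.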

- ``model_size``: **str**. The size of the model to use. It can be **'n'** (nano), **'s'** (small), **'m'** (medium) or **'l'** (large). Larger models could be more accurate but slower. Recommended: **'s'** ([#37](https://github.com/Eric-Canas/QReader/issues/37)). Default: 's'.

- ``min_confidence``: **float**. The minimum confidence of the QR detection to be considered valid. Values closer to 0.0 can get more _False Positives_, while values closer to 1.0 can lose difficult QRs. Default (and recommended): 0.5.

- ``reencode_to``: **str** | **None**. The encoding used to re-encode the `utf-8` decoded QR string. If `None`, the string is not re-encoded. If you find some characters being decoded incorrectly, try setting a [Code Page](https://learn.microsoft.com/en-us/windows/win32/intl/code-page-identifiers) that matches your specific charset. Recommendations that have been found useful:

  - 'shift-jis' for Germanic languages

  - 'cp65001' for Asian languages (Thanks to @nguyen-viet-hung for the suggestion)

- ``weights_folder``: **str** | **None**. Folder where the detection model will be downloaded. If `None`, it will be downloaded to the default qrdet package internal folder, which ensures that it is correctly removed when the package is uninstalled. You may need to change it when working in environments like [AWS Lambda](https://aws.amazon.com/es/pm/lambda/), where only the [/tmp folder](https://docs.aws.amazon.com/lambda/latest/api/API_EphemeralStorage.html) is writable, as reported in [#21](https://github.com/Eric-Canas/QReader/issues/21). Default: `None` (_/.model_).
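The idea behind ``reencode_to`` can be pictured with plain Python strings: when the decoder guesses the wrong charset, re-encoding the text with that charset recovers the original bytes, which can then be read as UTF-8. The sketch below is an illustration of that round trip, not the library's internal code; `reencode` is a hypothetical helper, and falling back to the unchanged string on failure is an assumption.

```python
def reencode(text, reencode_to="shift-jis"):
    """Undo a wrong charset guess by re-encoding and reading the bytes as UTF-8."""
    if reencode_to is None:
        return text
    try:
        return text.encode(reencode_to).decode("utf-8")
    except (UnicodeEncodeError, UnicodeDecodeError):
        # Assumption: keep the original string when the round trip is not possible
        return text


# "ñ" is the UTF-8 byte pair C3 B1; read as Shift-JIS it becomes two half-width katakana
garbled = "\uff83\uff71"
print(reencode(garbled))  # prints: ñ
```

Plain ASCII text survives the round trip unchanged, because ASCII bytes are the same in both encodings.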

### QReader.detect_and_decode(image, return_detections = False)

This method will decode the **QR** codes in the given image and return the decoded _strings_ (or _None_, if any of them was detected but not decoded).

- ``image``: **np.ndarray**. The image to be read. It is expected to be _RGB_ or _BGR_ (_uint8_). Format (_HxWx3_).

- ``return_detections``: **bool**. If `True`, it will return the full detection results together with the decoded QRs. If False, it will return only the decoded content of the QR codes.

- ``is_bgr``: **boolean**. If `True`, the received image is expected to be _BGR_ instead of _RGB_.

- **Returns**: **tuple[str | None] | tuple[tuple[dict[str, np.ndarray | float | tuple[float | int, float | int]], str | None]]**: A tuple with the decoded content of every detected **QR** code. If ``return_detections`` is `False`, the output will look like: `('Decoded QR 1', 'Decoded QR 2', None, 'Decoded QR 4', ...)`. If ``return_detections`` is `True`, it will look like: `(('Decoded QR 1', {'bbox_xyxy': (x1_1, y1_1, x2_1, y2_1), 'confidence': conf_1}), ('Decoded QR 2', {'bbox_xyxy': (x1_2, y1_2, x2_2, y2_2), 'confidence': conf_2, ...}), ...)`. See [QReader.detect()](#QReader_detect_table) for more information about the detection format.

### QReader.detect(image)

This method detects the **QR** codes in the image and returns a _tuple of dictionaries_ with all the detection information.

- ``image``: **np.ndarray**. The image to be read. It is expected to be _RGB_ or _BGR_ (_uint8_). Format (_HxWx3_).

- ``is_bgr``: **boolean**. If `True`, the received image is expected to be _BGR_ instead of _RGB_.

- **Returns**: **tuple[dict[str, np.ndarray|float|tuple[float|int, float|int]]]**. A tuple of dictionaries containing all the information of every detection. Contains the following keys.

| Key              | Value Description                            | Value Type                 | Value Form                  |
|------------------|----------------------------------------------|----------------------------|-----------------------------|
| `confidence`     | Detection confidence                         | `float`                    | `conf.`                     |
| `bbox_xyxy`      | Bounding box                                 | np.ndarray (**4**)         | `[x1, y1, x2, y2]`          |
| `cxcy`           | Center of bounding box                       | tuple[`float`, `float`]    | `(x, y)`                    |
| `wh`             | Bounding box width and height                | tuple[`float`, `float`]    | `(w, h)`                    |
| `polygon_xy`     | Precise polygon that segments the _QR_       | np.ndarray (**N**, **2**)  | `[[x1, y1], [x2, y2], ...]` |
| `quad_xy`        | Four corners polygon that segments the _QR_  | np.ndarray (**4**, **2**)  | `[[x1, y1], ..., [x4, y4]]` |
| `padded_quad_xy` | `quad_xy` padded to fully cover `polygon_xy` | np.ndarray (**4**, **2**)  | `[[x1, y1], ..., [x4, y4]]` |
| `image_shape`    | Shape of the input image                     | tuple[`int`, `int`]        | `(h, w)`                    |

> **NOTE:**
> - All `np.ndarray` values are of type `np.float32`.
> - All keys (except `confidence` and `image_shape`) have a normalized ('n') version. For example, `bbox_xyxy` represents the bbox of the QR in image coordinates [[0., im_w], [0., im_h]], while `bbox_xyxyn` contains the same bounding box in normalized coordinates [0., 1.].
> - `bbox_xyxy[n]` and `polygon_xy[n]` are clipped to `image_shape`. You can use them for indexing without further management.

**NOTE**: Is this the only method you will need? Take a look at QRDet.
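To make the relation between the pixel and normalized keys concrete, the sketch below scales a normalized box back to pixels and turns a pixel box into slices for cropping. It assumes only the key names and the `(h, w)` layout of `image_shape` described above; `to_pixels` and `crop_slices` are hypothetical helpers, and the detection values are made up for illustration.

```python
def to_pixels(bbox_xyxyn, image_shape):
    """Scale a normalized [x1, y1, x2, y2] box to pixels; image_shape is (h, w)."""
    height, width = image_shape
    x1, y1, x2, y2 = bbox_xyxyn
    return (x1 * width, y1 * height, x2 * width, y2 * height)


def crop_slices(bbox_xyxy):
    """Build (rows, columns) slices for cropping an HxWx3 array with a pixel box."""
    x1, y1, x2, y2 = (int(round(float(v))) for v in bbox_xyxy)
    return slice(y1, y2), slice(x1, x2)


# Hand-written detection using the documented keys (values are illustrative)
detection = {"bbox_xyxyn": (0.25, 0.5, 0.75, 1.0), "image_shape": (100, 200)}
box = to_pixels(detection["bbox_xyxyn"], detection["image_shape"])
rows, cols = crop_slices(box)
# With a NumPy image, the QR region would then be: image[rows, cols]
```

Because the documented boxes are already clipped to the image, the resulting slices should not need extra bounds checks.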

### QReader.decode(image, detection_result)

This method decodes a single **QR** code on the given image, described by a detection result.

Internally, this method will run the pyzbar decoder, using the information of the `detection_result` to apply different image preprocessing techniques that heavily increase the decoding rate.

- ``image``: **np.ndarray**. NumPy Array with the ``image`` that contains the _QR_ to decode. The image is expected to be in ``uint8`` format [_HxWxC_], RGB.

- ``detection_result``: dict[str, np.ndarray|float|tuple[float|int, float|int]]. One of the **detection dicts** returned by the **detect** method. Note that [QReader.detect()](#QReader_detect) returns a `tuple` of these dicts. This method expects just one of them.

- Returns: **str | None**. The decoded content of the _QR_ code or `None` if it couldn't be read.
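Calling ``detect`` and ``decode`` separately makes it possible to filter detections before spending time on decoding. The sketch below assumes only the two method signatures and the `confidence` key documented above; `decode_confident` and its `min_confidence` threshold of 0.8 are hypothetical choices for illustration.

```python
def decode_confident(reader, image, min_confidence=0.8):
    """Detect once, then decode only the detections at or above min_confidence."""
    decoded = []
    for detection in reader.detect(image=image):
        # Skip low-confidence detections before running the costlier decode step
        if detection["confidence"] >= min_confidence:
            decoded.append(reader.decode(image=image, detection_result=detection))
    return tuple(decoded)
```

Here `reader` would be a `QReader` instance and `image` an RGB `uint8` array, as described for the methods above.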

## Usage Tests

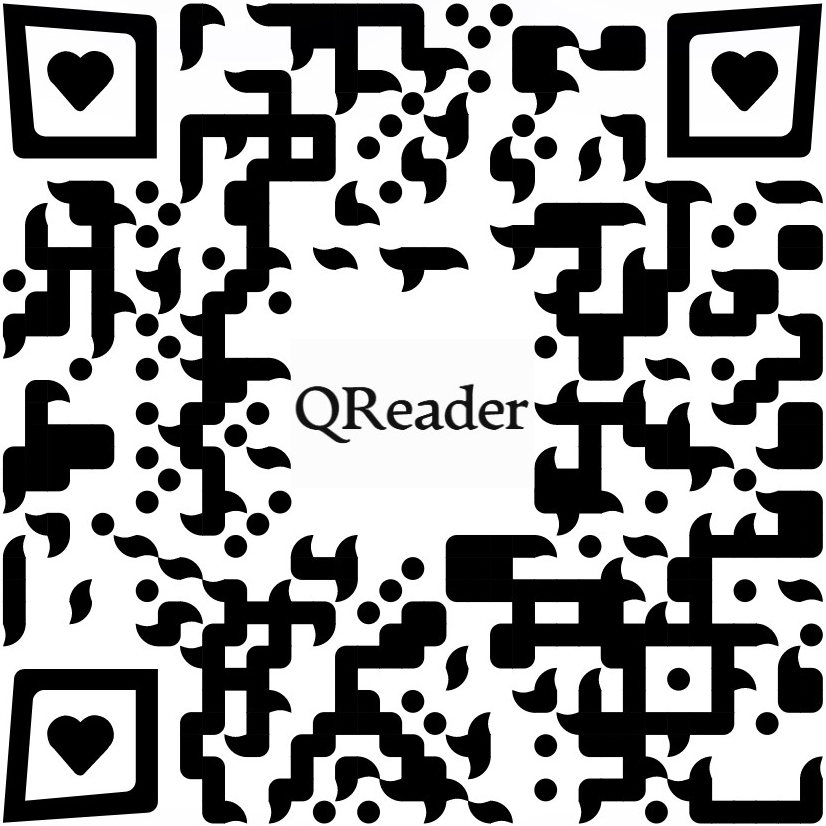

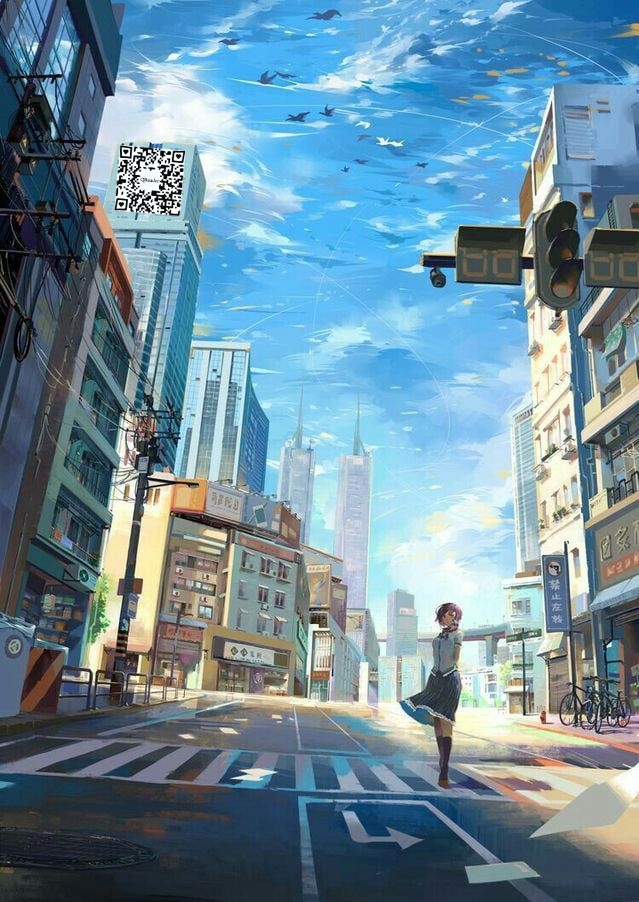

Two sample images. At left, an image taken with a mobile phone. At right, a 64x64 QR pasted over a drawing.

The following code will try to decode these images containing QRs with **QReader**, pyzbar and OpenCV.

```python
from qreader import QReader
from cv2 import QRCodeDetector, imread
from pyzbar.pyzbar import decode

# Initialize the three tested readers (QReader, OpenCV and pyzbar)
qreader_reader, cv2_reader, pyzbar_reader = QReader(), QRCodeDetector(), decode

for img_path in ('test_mobile.jpeg', 'test_draw_64x64.jpeg'):
    # Read the image
    img = imread(img_path)

    # Try to decode the QR code with the three readers
    qreader_out = qreader_reader.detect_and_decode(image=img)
    cv2_out = cv2_reader.detectAndDecode(img=img)[0]
    pyzbar_out = pyzbar_reader(image=img)

    # Read the content of the pyzbar output (double decoding will save you from a lot of wrongly decoded characters).
    # Each pyzbar result exposes its payload as bytes in `.data`
    pyzbar_out = tuple(out.data.decode('utf-8').encode('shift-jis').decode('utf-8') for out in pyzbar_out)

    # Print the results
    print(f"Image: {img_path} -> QReader: {qreader_out}. OpenCV: {cv2_out}. pyzbar: {pyzbar_out}.")
```

The output of the previous code is:

```txt

Image: test_mobile.jpeg -> QReader: ('https://github.com/Eric-Canas/QReader'). OpenCV: . pyzbar: ().

Image: test_draw_64x64.jpeg -> QReader: ('https://github.com/Eric-Canas/QReader'). OpenCV: . pyzbar: ().

```

Note that **QReader** internally uses pyzbar as its **decoder**. The improved **detection-decoding rate** that **QReader** achieves comes from the combination of different image pre-processing techniques and the YOLOv8-based **QR** detector, which is able to detect **QR** codes in harder conditions than classical _Computer Vision_ methods.

## Running tests

The tests can be launched via pytest. Make sure you install the test version of the package:

```bash

python -m pip install --editable ".[test]"

```

Then, you can run the tests with:

```bash

python -m pytest tests/

```

## Benchmark

### Rotation Test

| Method  | Max Rotation Degrees |
|---------|----------------------|
| Pyzbar  | 17°                  |
| OpenCV  | 46°                  |
| QReader | 79°                  |
