https://github.com/leptonai/gpud

GPUd automates monitoring, diagnostics, and issue identification for GPUs

gpu kubernetes monitoring nvidia nvidia-gpu

Last synced: 9 days ago

- Host: GitHub

- URL: https://github.com/leptonai/gpud

- Owner: leptonai

- License: apache-2.0

- Created: 2024-08-16T15:32:11.000Z (over 1 year ago)

- Default Branch: main

- Last Pushed: 2026-02-24T14:46:00.000Z (15 days ago)

- Last Synced: 2026-02-24T15:45:10.230Z (15 days ago)

- Topics: gpu, kubernetes, monitoring, nvidia, nvidia-gpu

- Language: Go

- Homepage: https://gpud.ai

- Size: 36.6 MB

- Stars: 476

- Watchers: 10

- Forks: 60

- Open Issues: 7

Metadata Files:

- Readme: README.md

- Contributing: CONTRIBUTING.md

- License: LICENSE

- Security: SECURITY.md

- Notice: NOTICE

Awesome Lists containing this project

- awesome-repositories - leptonai/gpud - GPUd automates monitoring, diagnostics, and issue identification for GPUs (Go)

README

## Overview

[GPUd](https://www.gpud.ai) is designed to ensure GPU efficiency and reliability by actively monitoring GPUs and effectively managing AI/ML workloads.

## Why GPUd

GPUd is built on years of experience operating large-scale GPU clusters at Meta, Alibaba Cloud, Uber, and Lepton AI. It is carefully designed to be self-contained and to integrate seamlessly with other systems such as Docker, containerd, Kubernetes, and NVIDIA ecosystems.

- **First-class GPU support**: GPUd is GPU-centric, providing a unified view of critical GPU metrics and issues.

- **Easy to run at scale**: GPUd is a self-contained binary that runs on any machine with a low footprint.

- **Production grade**: GPUd is used in [DGX Cloud Lepton](https://www.nvidia.com/en-us/data-center/dgx-cloud-lepton/)'s production infrastructure.

Most importantly, GPUd operates with minimal CPU and memory overhead in a non-critical path and requires only read-only operations. See [*architecture*](./docs/ARCHITECTURE.md) for more details.

## Get Started

The fastest way to see `gpud` in action is to watch our 40-second demo video below. For more detailed guides, see our [Tutorials page](./docs/TUTORIALS.md).

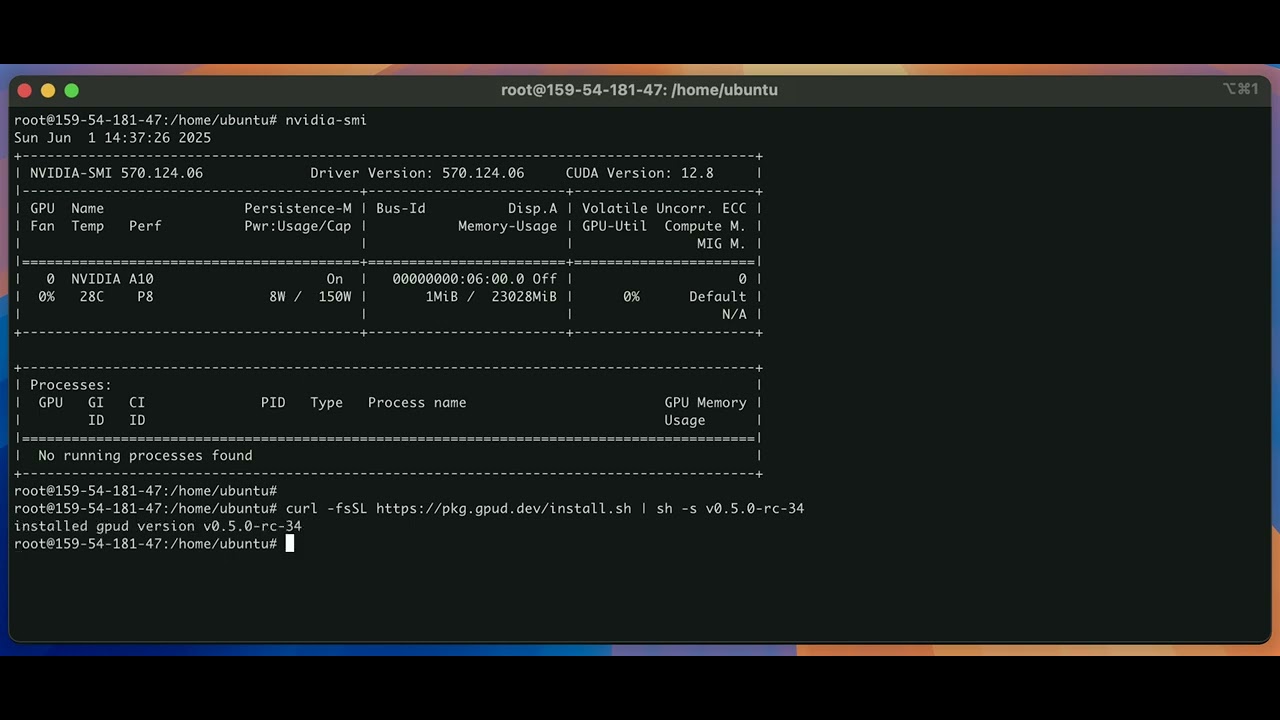

### Installation

To install the official release on a Linux amd64 (x86_64) machine:

```bash

curl -fsSL https://pkg.gpud.dev/install.sh | sh

```

To install the latest published version explicitly:

```bash

curl -fsSL https://pkg.gpud.dev/install.sh | sh -s $(curl -fsSL https://pkg.gpud.dev/unstable_latest.txt)

```

The install script currently also supports other architectures (e.g., arm64) and OSes (e.g., macOS).
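Before running the installer on a non-amd64 machine, it can help to confirm which OS and architecture the host reports. The following is a minimal sketch: `normalize_arch` is a hypothetical helper written for this example, and it does not claim to mirror how `install.sh` detects the platform.

```bash
# Map common `uname -m` values to the architecture names used in this README.
# normalize_arch is an illustrative helper, not part of gpud or install.sh.
normalize_arch() {
  case "$1" in
    x86_64|amd64) echo "amd64" ;;
    aarch64|arm64) echo "arm64" ;;
    *) echo "unknown" ;;
  esac
}

# Print the OS name reported by the kernel and the normalized architecture.
echo "OS: $(uname -s), arch: $(normalize_arch "$(uname -m)")"
```

If the architecture prints as `unknown`, check the release assets or the install guide before proceeding.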

---

### Run GPUd on a Host

This section covers running `gpud` directly on a host machine.

#### Resource Requirements (for Lepton Platform)

If you plan to join the Lepton platform (using the `--token` flag), your node must meet these minimum requirements:

**Minimum:**

- **3 CPU cores** (2-core instances will fail to join because kubelet and system pods require at least 3 cores)

- 4 GiB memory

**Recommended:**

- 4+ CPU cores (e.g., AWS c6a.xlarge)

- 8+ GiB memory

**Why these requirements:** GPUd periodically reads system files from `/sys/class/infiniband/`, `/proc/`, and other paths to collect telemetry data. On nodes with less than 4 GiB memory, the Linux page cache cannot retain these files between polling cycles, causing every read to hit the disk and resulting in excessive I/O (measured at 5+ MB/s on 2 GiB nodes vs. 0 MB/s on larger nodes). The 4 GiB minimum ensures sufficient page cache for GPUd to operate as a lightweight daemon without causing disk I/O pressure.

For complete hardware, software, and network requirements, see the official [NVIDIA DGX Cloud Lepton BYOC Requirements](https://docs.nvidia.com/dgx-cloud/lepton/compute/bring-your-own-compute/requirements/).

> **Note:** These requirements apply only when joining the Lepton platform; standalone `gpud` operation has lower requirements.
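The minimums above can be checked before attempting to join. This is a minimal sketch, not part of `gpud`: `meets_lepton_minimum` is a hypothetical helper that compares a core count and a memory size in KiB against the 3-core and 4 GiB thresholds stated in this section. Note that `MemTotal` on a nominally 4 GiB instance is usually slightly below 4 GiB because the kernel reserves some memory, so treat a near miss as a prompt to check the official requirements rather than a hard failure.

```bash
# Succeeds (exit status 0) when the arguments meet the stated minimums.
#   $1 = number of CPU cores
#   $2 = total memory in KiB
meets_lepton_minimum() {
  [ "$1" -ge 3 ] && [ "$2" -ge $((4 * 1024 * 1024)) ]
}

# Example on a Linux host (MemTotal in /proc/meminfo is reported in KiB):
#   cores="$(nproc)"
#   mem_kib="$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)"
#   if meets_lepton_minimum "$cores" "$mem_kib"; then
#     echo "meets the stated minimum"
#   else
#     echo "below the stated minimum"
#   fi
```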

#### With `systemd` (Recommended for Linux)

**Start the service:**

```bash
sudo gpud up [--token <YOUR_TOKEN>]
```

> **Note:** The optional `--token` connects `gpud` to the Lepton Platform. You can get a token from the [Settings > Tokens page](https://dashboard.dgxc-lepton.nvidia.com) on your dashboard.

To also specify a node group:

```bash
gpud up \
  --token <YOUR_TOKEN> \
  --node-group <YOUR_NODE_GROUP>
```

**Stop the service:**

```bash

sudo gpud down

```

**Uninstall:**

```bash

sudo rm /usr/local/bin/gpud

sudo rm /etc/systemd/system/gpud.service

```

#### Without `systemd` (e.g., macOS)

**Run in the foreground:**

```bash
gpud run [--token <YOUR_TOKEN>]
```

**Run in the background:**

```bash
nohup sudo /usr/local/bin/gpud run [--token <YOUR_TOKEN>] &>> <YOUR_LOG_FILE> &
```

**Uninstall:**

```bash

sudo rm /usr/local/bin/gpud

```

---

### Run GPUd with Kubernetes

The recommended way to deploy GPUd on Kubernetes is with our official [Helm chart](./deployments/helm/gpud/README.md).

### Build with Docker

A Dockerfile is provided to build a container image from source. For complete instructions, please see our [Docker guide in CONTRIBUTING.md](CONTRIBUTING.md#building-with-docker).

---

## Key Features

- Monitors critical GPU and GPU fabric metrics (power, temperature).

- Reports GPU and GPU fabric status (nvidia-smi parser, error checking).

- Detects critical GPU and GPU fabric errors (kmsg, hardware slowdown, NVML Xid event, DCGM).

- Monitors overall system metrics (CPU, memory, disk).

Check out [*components*](./docs/COMPONENTS.md) for a detailed list of components and their features.

## Integration

For users looking to set up a platform to collect and process data from gpud, please refer to [INTEGRATION](./docs/INTEGRATION.md).

## FAQs

### Does GPUd send data to lepton.ai?

GPUd collects a small anonymous usage signal by default to help the engineering team better understand usage frequencies. The data is strictly anonymized and **does not contain any sensitive data**. You can disable this behavior by setting `GPUD_NO_USAGE_STATS=true`. If GPUd is run with systemd (default option for the `gpud up` command), you can add the line `GPUD_NO_USAGE_STATS=true` to the `/etc/default/gpud` environment file and restart the service.
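For the systemd case, the opt-out described above amounts to adding one line to the environment file and restarting the service. The sketch below uses a hypothetical helper, `ensure_line`, that appends a line only if it is not already present, so it is safe to run more than once. The unit name `gpud.service` is taken from the uninstall steps earlier in this README.

```bash
# Append a line to a file only if that exact line is not already present.
#   $1 = file path
#   $2 = line to ensure
# ensure_line is an illustrative helper, not part of gpud.
ensure_line() {
  grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

# Example (run as root, since /etc/default/gpud is a system file):
#   ensure_line /etc/default/gpud 'GPUD_NO_USAGE_STATS=true'
#   systemctl restart gpud.service
```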

If you opt in to logging in to the Lepton AI platform, GPUd periodically sends system runtime information about the host to the platform so that it can report more helpful GPU health states. This information covers system workload and health only and contains no user data. The data is sent over secure channels.

### How to update GPUd?

GPUd is still in active development, regularly releasing new versions for critical bug fixes and new features. We strongly recommend always being on the latest version of GPUd.

When GPUd is registered with the Lepton platform, the platform will automatically update GPUd to the latest version. To disable such auto-updates, if GPUd is run with `systemd` (default option for the `gpud up` command), you may add the flag `FLAGS="--enable-auto-update=false"` to the `/etc/default/gpud` environment file and restart the service.
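After editing the environment file, it can be useful to confirm that the setting is actually in place before restarting the service. The following sketch assumes the flag is set on an uncommented `FLAGS=` line, as described above. `auto_update_disabled` is a hypothetical helper, not a `gpud` command.

```bash
# Succeeds (exit status 0) if the file has an uncommented FLAGS= line that
# contains --enable-auto-update=false.
#   $1 = path to the environment file
auto_update_disabled() {
  grep -Eq '^[[:space:]]*FLAGS=.*--enable-auto-update=false' "$1" 2>/dev/null
}

# Example:
#   if auto_update_disabled /etc/default/gpud; then
#     echo "auto-update is disabled in the environment file"
#   fi
```

If the file already defines `FLAGS=` with other options, add the flag to that existing line rather than adding a second `FLAGS=` line, since a later assignment would replace the earlier one.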

## Learn more

- [Why GPUd](./docs/WHY.md)

- [Install GPUd](./docs/INSTALL.md)

- [GPUd components](./docs/COMPONENTS.md)

- [GPUd architecture](./docs/ARCHITECTURE.md)

## Contributing

Please see [CONTRIBUTING.md](CONTRIBUTING.md) for guidelines on how to contribute to this project.