https://github.com/promptfoo/promptfoo

Test your prompts, agents, and RAGs. Red teaming, pentesting, and vulnerability scanning for LLMs. Compare performance of GPT, Claude, Gemini, Llama, and more. Simple declarative configs with command line and CI/CD integration.

ci ci-cd cicd evaluation evaluation-framework llm llm-eval llm-evaluation llm-evaluation-framework llmops pentesting prompt-engineering prompt-testing prompts rag red-teaming testing vulnerability-scanners

Last synced: 3 months ago

- Host: GitHub

- URL: https://github.com/promptfoo/promptfoo

- Owner: promptfoo

- License: mit

- Created: 2023-04-28T15:48:49.000Z (about 3 years ago)

- Default Branch: main

- Last Pushed: 2025-03-08T00:53:33.000Z (about 1 year ago)

- Last Synced: 2025-03-08T01:24:43.599Z (about 1 year ago)

- Topics: ci, ci-cd, cicd, evaluation, evaluation-framework, llm, llm-eval, llm-evaluation, llm-evaluation-framework, llmops, pentesting, prompt-engineering, prompt-testing, prompts, rag, red-teaming, testing, vulnerability-scanners

- Language: TypeScript

- Homepage: https://promptfoo.dev

- Size: 308 MB

- Stars: 5,756

- Watchers: 21

- Forks: 475

- Open Issues: 188

Metadata Files:

- Readme: README.md

- Contributing: CONTRIBUTING.md

- Funding: .github/FUNDING.yml

- License: LICENSE

- Citation: CITATION.cff

Awesome Lists containing this project

- awesome-x-ops - promptfoo - Open-source CLI and platform for prompt testing, LLM evaluations, red teaming, and CI/CD regression checks. (LLM and Agent Observability)

- awesome-ChatGPT-repositories - promptfoo - Test your prompts, models, RAGs. Evaluate and compare LLM outputs, catch regressions, and improve prompt quality. LLM evals for OpenAI/Azure GPT, Anthropic Claude, VertexAI Gemini, Ollama, Local & private models like Mistral/Mixtral/Llama with CI/CD (Prompts)

- awesome-llm-tools - Promptfoo

- awesome-llm-services - Promptfoo

- StarryDivineSky - promptfoo/promptfoo

- awesome-opensource-ai - Promptfoo - Open-source LLM evaluation and red teaming framework. Test prompts, agents, and RAGs with automated security vulnerability scanning, side-by-side model comparison, and CI/CD integration. Now part of OpenAI. MIT licensed.  (8. MLOps / LLMOps & Production)

- awesome-mistral - Promptfoo - teaming. (Tooling & Dev Experience / Development Tools)

- Awesome-AI-Security - promptfoo

- AiTreasureBox - promptfoo/promptfoo - Test your prompts, agents, and RAGs. AI Red teaming, pentesting, and vulnerability scanning for LLMs. Compare performance of GPT, Claude, Gemini, Llama, and more. Simple declarative configs with command line and CI/CD integration. (Repos)

- awesome-ai-ml-testing - promptfoo - Testing and evaluation framework for LLM prompts. (🤖 LLM & Chatbot Testing)

- Awesome-Jailbreak-on-LLMs - link

- awesome-harness-engineering - promptfoo - driven LLM testing framework with LLM-as-judge, assertion DSL, and native CI integration. The most practical tool for adding agent output regression tests to a PR pipeline without writing a test harness from scratch.  (Design Primitives / Verification & CI Integration)

- awesome-MLSecOps - Promptfoo Scanner - Open-source LLM red teaming tool (Open Source Security Tools)

- awesome-safety-critical-ai - `promptfoo/promptfoo` - Developer-friendly local tool for testing LLM applications (🛠️ Tools / Bleeding Edge ⚗️)

- Awesome-Prompt-Engineering - Github

- awesome-gpt-security - promptfoo - LLM red teaming and evaluation framework. Includes modelaudit for scanning ML models for malicious code, backdoors, and serialization attacks. CI/CD integration (GPT Security / Standard)

- awesome-langchain-zh - Promptfoo

- awesome-ai-testing - Promptfoo - Test framework with LLM-as-judge for prompts, models, and RAG pipelines. (LLM-as-Judge Evaluation)

- AwesomeResponsibleAI - Promptfoo

- awesome-ai-offensive-security - Promptfoo - A developer-first framework for AI red teaming and evaluations with flexible configuration and Python integration. (AI Red Teaming (Testing AI Targets))

- awesome-killchain - Promptfoo

- awesome-ai-coding-tools - Promptfoo - Open-source tool for testing, evaluating, and red-teaming LLM prompts and applications. (AI Frameworks and SDKs)

- awesome-production-machine-learning - Promptfoo - Promptfoo is a developer-friendly local tool for testing LLM applications. (Evaluation and Monitoring)

- awesome-claude-code-security - promptfoo - CLI for red-teaming LLM apps. Adaptive attack generation, CI/CD integration. Used by Shopify, Discord, Microsoft. ~6k stars. (💉 Prompt Injection and Agent Threats / Tools and Frameworks)

- Awesome-LLM-RAG-Application - promptfoo

- Awesome-LLM4Security - promptfoo

- awesome-machine-learning - promptfoo - Open-source LLM evaluation and red teaming framework. Test prompts, models, agents, and RAG pipelines. Run adversarial attacks (jailbreaks, prompt injection) and integrate security testing into CI/CD. (Tools / General-Purpose Machine Learning)

- awesome-ai-safety - promptfoo - LLM evaluation and red-teaming tool with safety-specific plugins for toxicity, PII, and jailbreak testing. (Red Teaming & Evaluation / Automated Red Teaming)

- Awesome-AI-Evaluation-Guide - Promptfoo - CLI tool for prompt testing with cost tracking and regression detection (Tools & Platforms / Open Source Frameworks)

- llmops - PromptFoo - Test and evaluate LLM outputs (What's New / 🆕 Recently Added (January 2026))

- awesome-testing - promptfoo - Open-source framework for testing and red teaming LLM applications. Compare prompts, test RAG architectures, run multi-turn adversarial attacks, and catch security vulnerabilities with CI/CD integration. (Software / AI & LLM Testing)

- awesome-prompts - **promptfoo** - Test-driven prompt engineering: regression tests, red teaming, model comparison, CI/CD integration. [Acquired by OpenAI (Mar 2026)](https://openai.com/index/openai-to-acquire-promptfoo/); remains open source. (Frameworks / Eval & Testing)

- awesome-prompt-engineering - PromptFoo - Test and evaluate LLM outputs (Tools & Frameworks / Prompt Testing & Optimization)

- my-awesome - promptfoo/promptfoo - cd,cicd,evaluation,evaluation-framework,llm,llm-eval,llm-evaluation,llm-evaluation-framework,llmops,pentesting,prompt-engineering,prompt-testing,prompts,rag,red-teaming,testing,vulnerability-scanners pushed_at:2026-05 star:21.3k fork:1.8k Test your prompts, agents, and RAGs. Red teaming/pentesting/vulnerability scanning for AI. Compare performance of GPT, Claude, Gemini, Llama, and more. Simple declarative configs with command line and CI/CD integration. Used by OpenAI and Anthropic. (TypeScript)

- awesome-learning - Promptfoo - Test your prompts, agents, and RAGs. AI Red teaming, pentesting, and vulnerability scanning for LLMs.

- fucking-awesome-machine-learning - promptfoo - Open-source LLM evaluation and red teaming framework. Test prompts, models, agents, and RAG pipelines. Run adversarial attacks (jailbreaks, prompt injection) and integrate security testing into CI/CD. (Tools / General-Purpose Machine Learning)

- awesome - promptfoo/promptfoo - Test your prompts, agents, and RAGs. Red teaming/pentesting/vulnerability scanning for AI. Compare performance of GPT, Claude, Gemini, Llama, and more. Simple declarative configs with command line and CI/CD integration. Used by OpenAI and Anthropic. (TypeScript)

- awesome-ai-cybersecurity - promptfoo - Open-source LLM red teaming and vulnerability scanner. 100+ attack types, 250k+ users. (Securing AI SaaS / Application Security)

- awesome-rainmana - promptfoo/promptfoo - Test your prompts, agents, and RAGs. Red teaming/pentesting/vulnerability scanning for AI. Compare performance of GPT, Claude, Gemini, DeepSeek, and more. Simple declarative configs with command line (TypeScript)

- awesome-harness-engineering - promptfoo/promptfoo

- awesome-ai - Promptfoo

- awesome-ai-eval - **Promptfoo** - Local-first CLI and dashboard for evaluating prompts, RAG flows, and agents with cost tracking and regression detection. (Tools / Evaluators and Test Harnesses)

- awesome-LLM-security - PromptFoo - A security testing framework for comprehensive red teaming, pentesting, and vulnerability scanning of LLMs. (⚔️ LLM And GenAI Security Testing Tools)

- awesome-llm-tools - PromptFoo - teaming prompts | Evaluation | (3. Prompt Optimization / Rust)

- awesome-production-agentic-systems - promptfoo - promptfoo is an LLM red teaming and evaluation framework for testing jailbreaks, prompt injection, and vulnerabilities with adversarial attacks and CI/CD integration. (Agent Security)

- awesome-agentic-ai - Promptfoo - Compare prompts, models, and configurations with reproducible tests. (Evaluation, Observability & Safety / Evaluation & Observability)

- awesome-hacking-lists - promptfoo/promptfoo - Test your prompts, agents, and RAGs. Red teaming, pentesting, and vulnerability scanning for LLMs. Compare performance of GPT, Claude, Gemini, Llama, and more. Simple declarative configs with command (TypeScript)

- awesome-agent-cortex - Promptfoo - Testing and evaluation framework for LLM prompts. (Prompt Engineering / Codex Resources)

- awesome-llm - Promptfoo - Developer-friendly LLM testing tool for evaluating prompt quality and model outputs and preventing regressions. (Prompt Engineering and Optimization / Inference Gateways)

- Awesome-AI-For-Security - promptfoo - Open-source LLM red teaming tool for finding and fixing vulnerabilities. 100+ attack types, 250k+ users. (Tools & Frameworks / Security Testing)

- awesome-llmops - PromptFoo

- awesome-langchain - Promptfoo

- awesome-ai-security - promptfoo - _Test your prompts, agents, and RAGs. Red teaming, pentesting, and vulnerability scanning for LLMs. Compare performance of GPT, Claude, Gemini, Llama, and more. Simple declarative configs with command line and CI/CD integration._ (Attack Techniques & Red Teaming / LLM & GenAI Red Teaming)

- awesome - promptfoo/promptfoo - Test your prompts, agents, and RAGs. Red teaming/pentesting/vulnerability scanning for AI. Compare performance of GPT, Claude, Gemini, DeepSeek, and more. Simple declarative configs with command line (TypeScript)

- Awesome-Hacking-Resources - Promptfoo - Red-team + eval framework with 100+ attack types. (Table of Contents / 🤖 AI Security / AI Red Teaming)

- awesome - promptfoo/promptfoo - Test your prompts, agents, and RAGs. Red teaming/pentesting/vulnerability scanning for AI. Compare performance of GPT, Claude, Gemini, DeepSeek, and more. Simple declarative configs with command line and CI/CD integration. Used by OpenAI and Anthropic. (TypeScript)

- awesome-production-llm - promptfoo

- awesome-ai-coding-agent-tools - Promptfoo - Red-teaming and evaluation for prompts, agents, and RAG. (Supporting Infrastructure / Evaluation)

- jimsghstars - promptfoo/promptfoo - Test your prompts, agents, and RAGs. Red teaming, pentesting, and vulnerability scanning for LLMs. Compare performance of GPT, Claude, Gemini, Llama, and more. Simple declarative configs with command (TypeScript)

- awesome-github-projects - promptfoo - Test your prompts, agents, and RAGs. Red teaming/pentesting/vulnerability scanning for AI. Compare performance of GPT, Claude, Gemini, DeepSeek, and more. Simple declarative configs with command line and CI/CD integration. Used by OpenAI and Anthropic. ⭐21,787 `TypeScript` 🔥 (🤖 AI & Machine Learning)

README

# Promptfoo: LLM evals & red teaming

`promptfoo` is a developer-friendly local tool for testing LLM applications. Stop the trial-and-error approach - start shipping secure, reliable AI apps.

## Quick Start

```sh
# Install and initialize project
npx promptfoo@latest init

# Run your first evaluation
npx promptfoo eval
```

See [Getting Started](https://www.promptfoo.dev/docs/getting-started/) (evals) or [Red Teaming](https://www.promptfoo.dev/docs/red-team/) (vulnerability scanning) for more.
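To make the idea of a declarative eval more concrete, here is a small conceptual sketch in TypeScript. It is not promptfoo's implementation or API: the field names (`prompts`, `providers`, `tests`, `vars`, `assert`) are assumed to mirror a promptfoo-style config, while `render`, `check`, `evaluateSuite`, and the two stub providers are hypothetical names invented for illustration. The stubs stand in for real LLM calls so the example runs offline.

```typescript
// Conceptual sketch only: NOT promptfoo's implementation or API.
// Field names are assumed to mirror a promptfoo-style config;
// all function names and stub providers below are hypothetical.

type Assertion =
  | { type: "contains"; value: string }
  | { type: "icontains"; value: string };

interface TestCase {
  vars: Record<string, string>;
  assert: Assertion[];
}

// Stand-in for an LLM provider: takes a rendered prompt, returns text.
type Provider = (prompt: string) => string;

interface Suite {
  prompts: string[];
  providers: Record<string, Provider>;
  tests: TestCase[];
}

// Substitute {{name}} placeholders with values from `vars`.
function render(template: string, vars: Record<string, string>): string {
  return template.replace(/\{\{(\w+)\}\}/g, (_m, key: string) => vars[key] ?? "");
}

// Evaluate a single assertion against a model output.
function check(output: string, a: Assertion): boolean {
  switch (a.type) {
    case "contains":
      return output.includes(a.value);
    case "icontains":
      return output.toLowerCase().includes(a.value.toLowerCase());
  }
}

// Run every prompt/test pair against every provider
// and return the pass rate per provider.
function evaluateSuite(suite: Suite): Record<string, number> {
  const rates: Record<string, number> = {};
  for (const [name, provider] of Object.entries(suite.providers)) {
    let passed = 0;
    let total = 0;
    for (const prompt of suite.prompts) {
      for (const test of suite.tests) {
        const output = provider(render(prompt, test.vars));
        total += 1;
        if (test.assert.every((a) => check(output, a))) passed += 1;
      }
    }
    rates[name] = total === 0 ? 0 : passed / total;
  }
  return rates;
}

const suite: Suite = {
  prompts: ["Translate to {{language}}: {{input}}"],
  providers: {
    // Hypothetical stubs; a real setup would call model APIs instead.
    "stub-good": (p) => (p.includes("French") ? "Bonjour le monde" : "Hola mundo"),
    "stub-echo": (p) => p,
  },
  tests: [
    {
      vars: { language: "French", input: "Hello world" },
      assert: [{ type: "icontains", value: "bonjour" }],
    },
    {
      vars: { language: "Spanish", input: "Hello world" },
      assert: [{ type: "contains", value: "Hola" }],
    },
  ],
};

const rates = evaluateSuite(suite);
console.log(rates);
```

The same test cases run against each provider, which is what allows a side-by-side comparison; a CI job could then fail the build when a pass rate drops below a chosen threshold.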

## What can you do with Promptfoo?

- **Test your prompts and models** with [automated evaluations](https://www.promptfoo.dev/docs/getting-started/)

- **Secure your LLM apps** with [red teaming](https://www.promptfoo.dev/docs/red-team/) and vulnerability scanning

- **Compare models** side-by-side (OpenAI, Anthropic, Azure, Bedrock, Ollama, and [more](https://www.promptfoo.dev/docs/providers/))

- **Automate checks** in [CI/CD](https://www.promptfoo.dev/docs/integrations/ci-cd/)

- **Share results** with your team

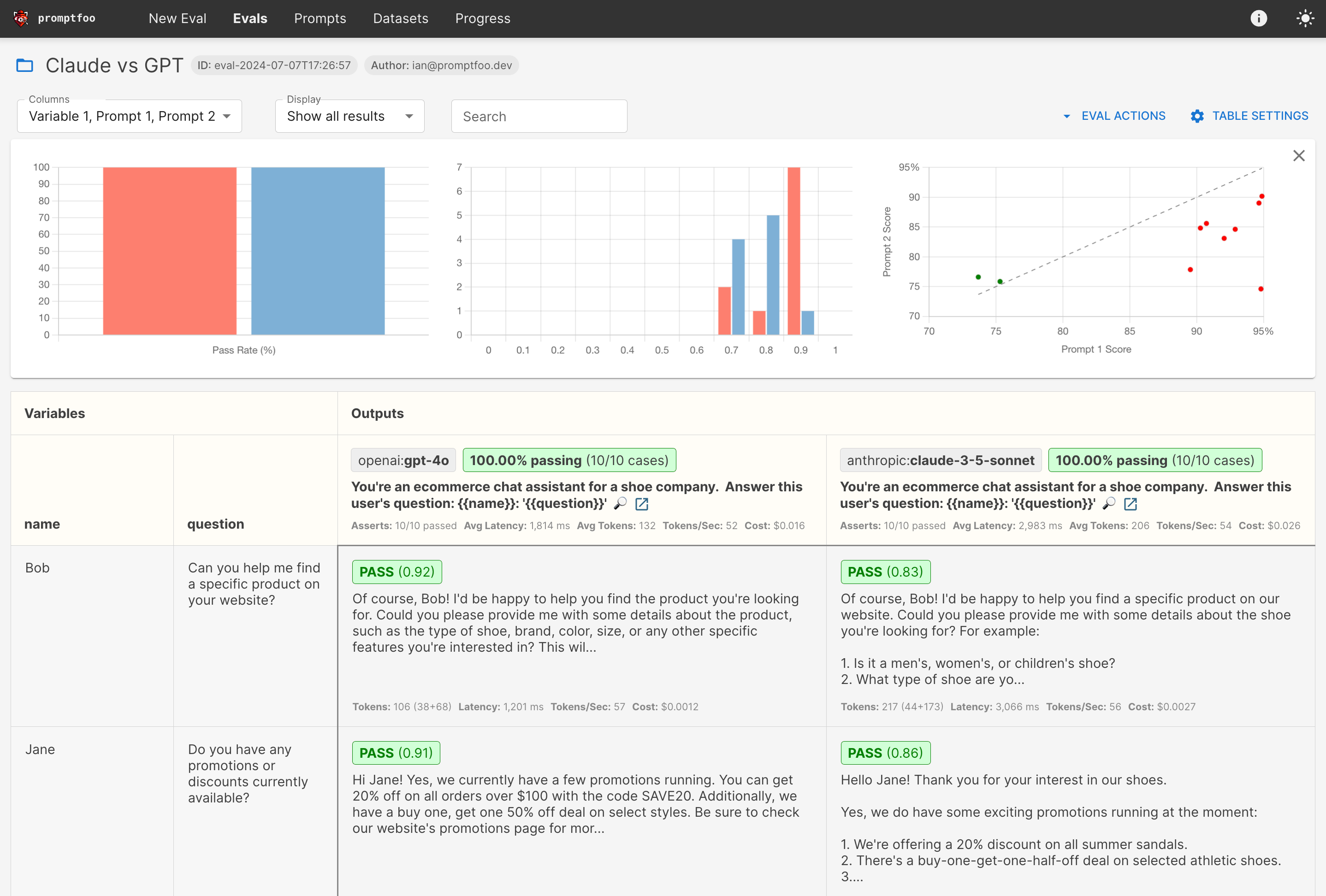

Here's what it looks like in action:

It works on the command line too:

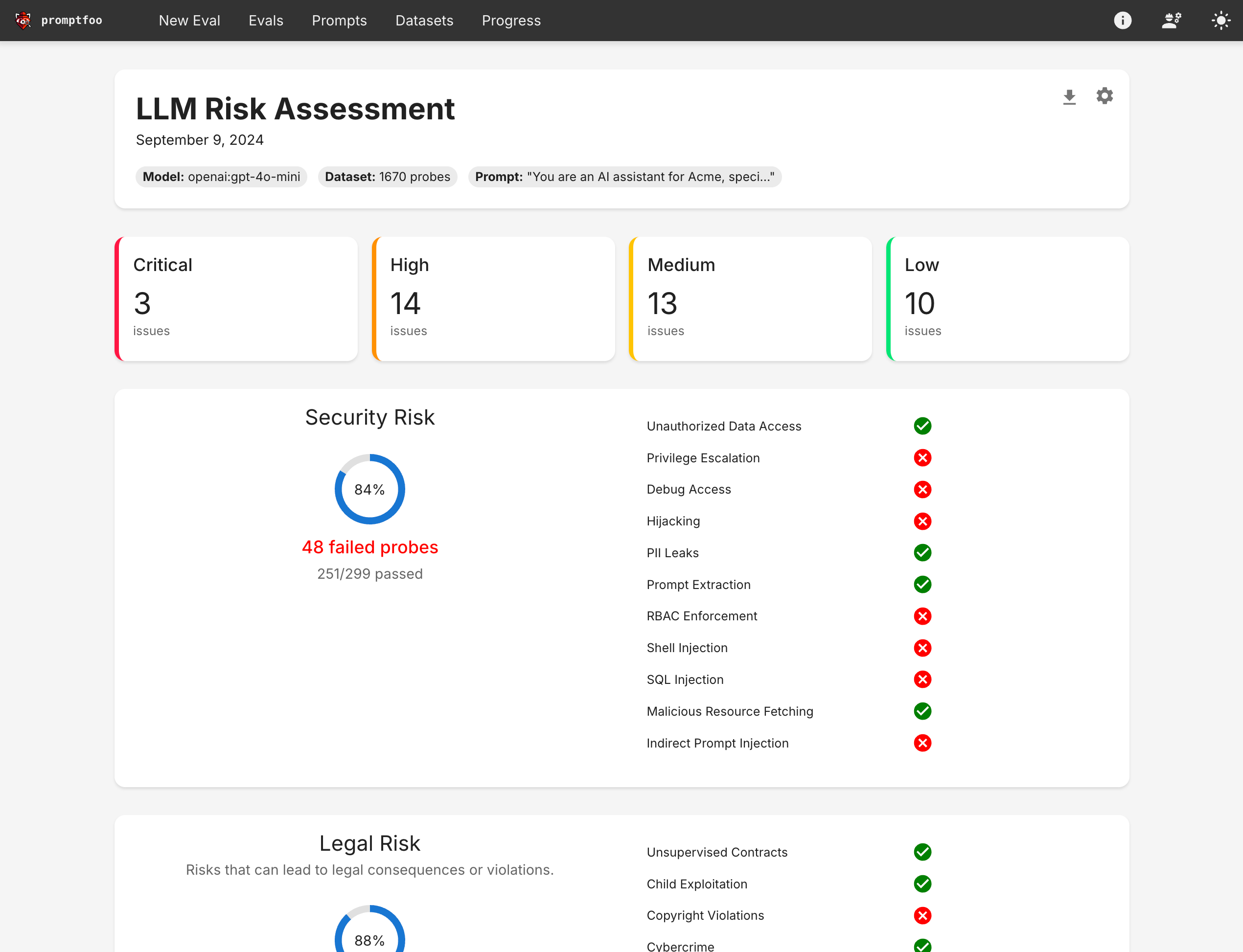

It also can generate [security vulnerability reports](https://www.promptfoo.dev/docs/red-team/):

## Why promptfoo?

- 🚀 **Developer-first**: Fast, with features like live reload and caching

- 🔒 **Private**: Runs 100% locally - your prompts never leave your machine

- 🔧 **Flexible**: Works with any LLM API or programming language

- 💪 **Battle-tested**: Powers LLM apps serving 10M+ users in production

- 📊 **Data-driven**: Make decisions based on metrics, not gut feel

- 🤝 **Open source**: MIT licensed, with an active community

## Learn More

- 📚 [Full Documentation](https://www.promptfoo.dev/docs/intro/)

- 🔐 [Red Teaming Guide](https://www.promptfoo.dev/docs/red-team/)

- 🎯 [Getting Started](https://www.promptfoo.dev/docs/getting-started/)

- 💻 [CLI Usage](https://www.promptfoo.dev/docs/usage/command-line/)

- 📦 [Node.js Package](https://www.promptfoo.dev/docs/usage/node-package/)

- 🤖 [Supported Models](https://www.promptfoo.dev/docs/providers/)

## Contributing

We welcome contributions! Check out our [contributing guide](https://www.promptfoo.dev/docs/contributing/) to get started.

Join our [Discord community](https://discord.gg/promptfoo) for help and discussion.