https://github.com/rupeshs/ovllm_node_addon

OpenVINO LLM Node.js C++ addon

addon cpp genai llm node-gyp nodejs openvino python tinyllama tinyllamachat

Last synced: 10 months ago

- Host: GitHub

- URL: https://github.com/rupeshs/ovllm_node_addon

- Owner: rupeshs

- Created: 2024-07-07T14:58:14.000Z (almost 2 years ago)

- Default Branch: main

- Last Pushed: 2024-07-13T13:43:57.000Z (almost 2 years ago)

- Last Synced: 2024-07-13T14:52:39.184Z (almost 2 years ago)

- Topics: addon, cpp, genai, llm, node-gyp, nodejs, openvino, python, tinyllama, tinyllamachat

- Language: C++

- Size: 1.13 MB

- Stars: 4

- Watchers: 2

- Forks: 0

- Open Issues: 0

Metadata Files:

- Readme: readme.md

README

# Node.js OpenVINO LLM C++ addon

This is a Node.js C++ addon for running LLMs with [OpenVINO GenAI](https://github.com/openvinotoolkit/openvino.genai/).

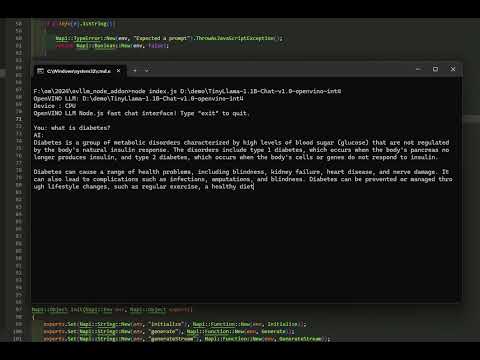

Tested with the TinyLlama 1.1B Chat (v1.0) OpenVINO int4 model on Windows 11 (Intel Core i7 CPU).

Watch the YouTube video below for a demo:

[Demo video on YouTube](https://www.youtube.com/watch?v=dAk8rlFE3QE)

## Build

- Visual Studio 2022 (C++)

- Node.js 20.11 or higher

- node-gyp

- Python 3.11

- OpenVINO GenAI archive release [openvino_genai_windows_2024.2.0.0_x86_64](https://docs.openvino.ai/2024/get-started/install-openvino.html?PACKAGE=OPENVINO_BASE&VERSION=v_2024_2_0&OP_SYSTEM=WINDOWS&DISTRIBUTION=ARCHIVE)
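
Before building, it can help to confirm that the installed Node.js meets the 20.11 minimum listed above. The following is a minimal sketch of such a check; the helper `isNodeVersionAtLeast` is hypothetical and is not part of this repository.

```javascript
// Hypothetical preflight helper; not taken from the repository.
// Returns true when `version` (e.g. "v20.11.0") is at least minMajor.minMinor.
function isNodeVersionAtLeast(version, minMajor, minMinor) {
  // Strip the leading "v" that process.version includes, then split into numbers.
  const [major, minor] = version.replace(/^v/, "").split(".").map(Number);
  return major > minMajor || (major === minMajor && minor >= minMinor);
}

// Warn if the running Node.js is older than the minimum from the list above.
if (!isNodeVersionAtLeast(process.version, 20, 11)) {
  console.warn(`Node.js ${process.version} is older than the required 20.11.`);
}
```

Saving this as a separate script (the file name is up to you) and running it with `node` before `npm install` gives an early warning instead of a later build failure.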

Run the following commands to build:

```
npm install
node-gyp configure
node-gyp build
```

## Run

To test the addon, run the `index.js` script and pass the path to the model directory as the first argument:

`node index.js D:/demo/TinyLlama-1.1B-Chat-v1.0-openvino-int4`

To disable streaming, pass `nostream` as the second argument:

`node index.js D:/demo/TinyLlama-1.1B-Chat-v1.0-openvino-int4 nostream`
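
The two commands above imply that the script takes the model directory as its first argument and an optional `nostream` flag as its second. The sketch below shows one way such arguments could be interpreted. It is an illustration only: `parseCliArgs` and the shape of its return value are assumptions, not code from the repository's `index.js`, and the addon's own API is deliberately left out.

```javascript
// Hypothetical argument parser; the real index.js may handle arguments differently.
// `argv` is expected to be process.argv.slice(2).
function parseCliArgs(argv) {
  const [modelPath, mode] = argv;
  if (!modelPath) {
    throw new Error("Usage: node index.js <model_dir> [nostream]");
  }
  // Streaming is assumed to be the default; "nostream" turns it off.
  return { modelPath, stream: mode !== "nostream" };
}

// Example using the arguments from the second command above.
const exampleOptions = parseCliArgs([
  "D:/demo/TinyLlama-1.1B-Chat-v1.0-openvino-int4",
  "nostream",
]);
console.log(exampleOptions);
```

Keeping the parsing in a small pure function makes the default (streaming on) explicit and easy to test without loading a model.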

## Supported models

See the OpenVINO GenAI [list of supported models](https://github.com/openvinotoolkit/openvino.genai/blob/releases/2024/2/src/docs/SUPPORTED_MODELS.md) for the 2024.2 release.