https://github.com/scale3-labs/langtrace

Langtrace 🔍 is an open-source, Open Telemetry based end-to-end observability tool for LLM applications, providing real-time tracing, evaluations, and metrics for popular LLMs, LLM frameworks, vector databases, and more. Integrate using TypeScript or Python. 🚀💻📊

ai datasets evaluations gpt langchain llm llm-framework llmops observability open-source open-telemetry openai prompt-engineering tracing

Last synced: 12 months ago

- Host: GitHub

- URL: https://github.com/scale3-labs/langtrace

- Owner: Scale3-Labs

- License: agpl-3.0

- Created: 2024-03-30T23:49:41.000Z (about 2 years ago)

- Default Branch: main

- Last Pushed: 2025-05-04T16:45:22.000Z (12 months ago)

- Last Synced: 2025-05-04T17:37:16.017Z (12 months ago)

- Topics: ai, datasets, evaluations, gpt, langchain, llm, llm-framework, llmops, observability, open-source, open-telemetry, openai, prompt-engineering, tracing

- Language: TypeScript

- Homepage: https://langtrace.ai

- Size: 3.69 MB

- Stars: 915

- Watchers: 13

- Forks: 90

- Open Issues: 3

Metadata Files:

- Readme: README.md

- Contributing: contributing.md

- License: LICENSE

- Security: SECURITY.md

Awesome Lists containing this project

- awesome-ChatGPT-repositories - langtrace - Langtrace 🔍 is an open-source, Open Telemetry based end-to-end observability tool for LLM applications, providing real-time tracing, evaluations, and metrics for popular LLMs, LLM frameworks, vector databases, and more. Integrate using TypeScript or Python. 🚀💻📊 (Prompts)

README

Langtrace

Open Source Observability for LLM Applications

---

## 📚 Table of Contents

- [✨ Features](#-features)

- [🚀 Quick Start](#-quick-start)

- [🔗 Integrations](#-supported-integrations)

- [🌐 Getting Started](#-getting-started)

- [🏠 Self Hosting](#-langtrace-self-hosted)

- [📐 Architecture](#-langtrace-system-architecture)

- [🤝 Contributing](#-contributions)

- [🔒 Security](#-security)

- [❓ FAQ](#-frequently-asked-questions)

- [👥 Contributors](#-contributors)

- [📜 License](#-license)

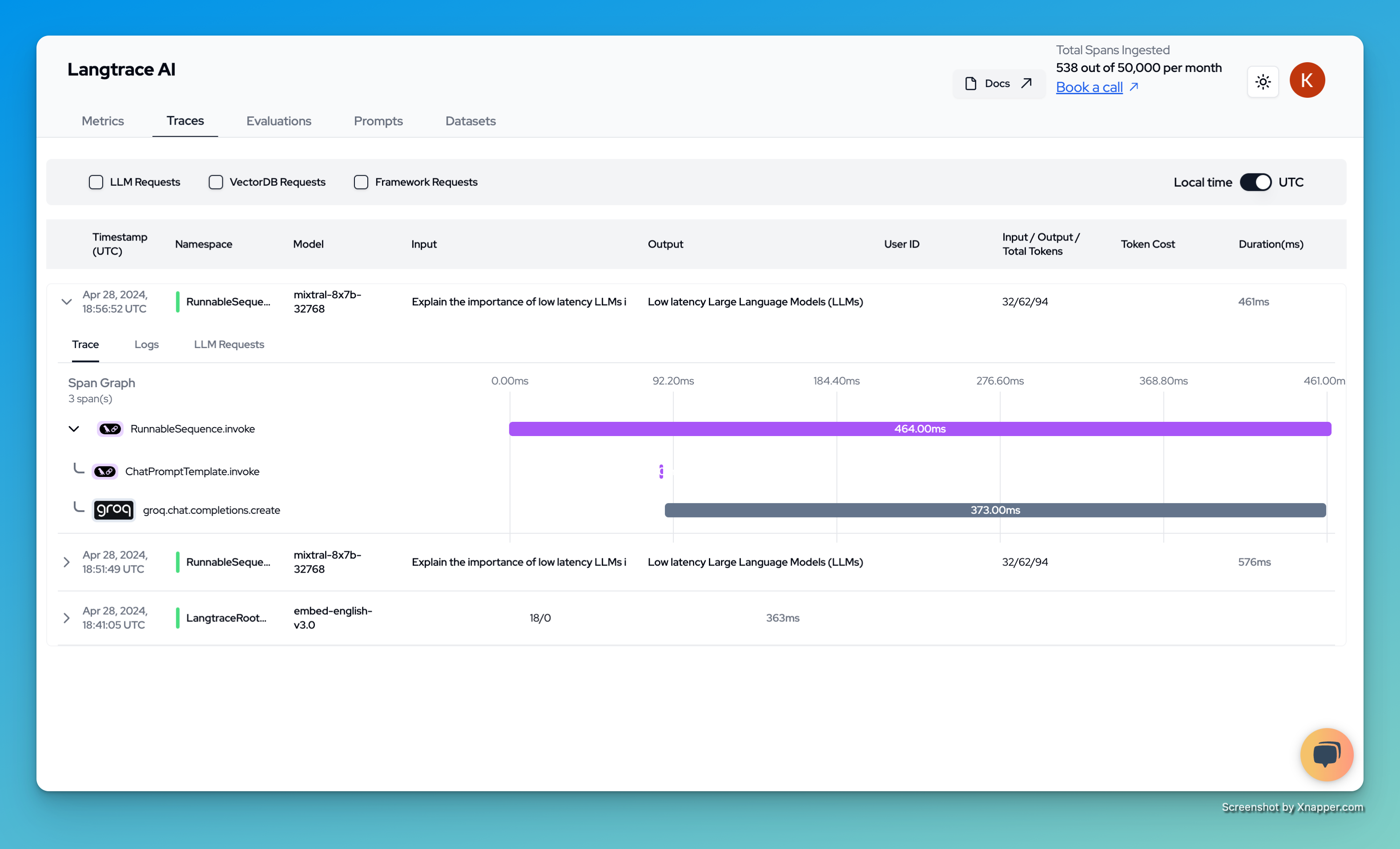

Langtrace is open source observability software that lets you capture, debug, and analyze traces and metrics from all your applications that leverage LLM APIs, vector databases, and LLM-based frameworks.

## ✨ Features

- 📊 **Open Telemetry Support**: Built on OTEL standards for comprehensive tracing

- 🔄 **Real-time Monitoring**: Track LLM API calls, vector operations, and framework usage

- 🎯 **Performance Insights**: Analyze latency, costs, and usage patterns

- 🔍 **Debug Tools**: Trace and debug your LLM application workflows

- 📈 **Analytics**: Get detailed metrics and visualizations

- 🏠 **Self-hosting Option**: Deploy on your own infrastructure

## 🚀 Quick Start

```bash

# For TypeScript/JavaScript

npm i @langtrase/typescript-sdk

# For Python

pip install langtrace-python-sdk

```

Initialize in your code:

```typescript

// TypeScript

import * as Langtrace from '@langtrase/typescript-sdk'

Langtrace.init({ api_key: '' }) // Get your API key at langtrace.ai

```

```python

# Python

from langtrace_python_sdk import langtrace

langtrace.init(api_key='') # Get your API key at langtrace.ai

```

For detailed setup instructions, see [Getting Started](#-getting-started).

## 📊 Open Telemetry Support

The traces generated by Langtrace adhere to [Open Telemetry (OTEL) standards](https://opentelemetry.io/docs/concepts/signals/traces/). We are developing [semantic conventions](https://opentelemetry.io/docs/concepts/semantic-conventions/) for the traces generated by this project. You can check out the current definitions in [this repository](https://github.com/Scale3-Labs/langtrace-trace-attributes/tree/main/schemas). Note: This is ongoing work; we encourage you to get involved and welcome your feedback.
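To make the trace model more concrete, here is a minimal sketch of how an OTEL-style trace is organized: every span in a trace shares one trace ID, and each child span records the span ID of its parent. The `Span` class, its field names, and the attribute keys are illustrative assumptions for this example; they are neither the OpenTelemetry API nor Langtrace's schema, whose definitions live in the repository linked above.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    """Illustrative stand-in for an OTEL-style span (not a real SDK type)."""
    name: str
    trace_id: str
    parent_id: Optional[str] = None
    attributes: dict = field(default_factory=dict)
    # OTEL span IDs are 8 bytes, trace IDs are 16 bytes (hex-encoded here).
    span_id: str = field(default_factory=lambda: secrets.token_hex(8))
    start_ns: int = field(default_factory=time.time_ns)
    end_ns: Optional[int] = None

    def child(self, name: str, attributes: Optional[dict] = None) -> "Span":
        # A child inherits the trace ID and points back at its parent span.
        return Span(name=name, trace_id=self.trace_id,
                    parent_id=self.span_id, attributes=attributes or {})

    def end(self) -> None:
        self.end_ns = time.time_ns()


# One trace: a request that runs a vector search and then an LLM call.
root = Span(name="handle_request", trace_id=secrets.token_hex(16))
search = root.child("vector_search", {"db.system": "example-vectordb"})
completion = root.child("llm_call", {"example.model": "example-model"})
for span in (search, completion, root):
    span.end()
```

Grouping spans by `trace_id` and following `parent_id` links is what lets a tracing UI reconstruct the call tree for a single request.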

---

## 📦 SDK Repositories

- [Langtrace Typescript SDK](https://github.com/Scale3-Labs/langtrace-typescript-sdk)

- [Langtrace Python SDK](https://github.com/Scale3-Labs/langtrace-python-sdk)

- [Semantic Span Attributes](https://github.com/Scale3-Labs/langtrace-trace-attributes)

---

## 🚀 Getting Started

### Langtrace Cloud ☁️

To use the managed SaaS version of Langtrace, follow the steps below:

1. Sign up by going to [this link](https://langtrace.ai).

2. Create a new Project after signing up. Projects are containers for storing traces and metrics generated by your application. If you have only one application, one project is enough.

3. Generate an API key by going inside the project.

4. In your application, install the Langtrace SDK and initialize it with the API key you generated in step 3.

5. The code for installing and setting up the SDK is shown below:

### If your application is built using TypeScript/JavaScript

```bash
npm i @langtrase/typescript-sdk
```

```typescript
import * as Langtrace from '@langtrase/typescript-sdk' // Must precede any llm module imports

Langtrace.init({ api_key: '<LANGTRACE_API_KEY>' }) // Replace the placeholder with your API key
```

OR

```typescript
import * as Langtrace from "@langtrase/typescript-sdk"; // Must precede any llm module imports

Langtrace.init(); // Expects LANGTRACE_API_KEY to be set as an environment variable
```

### If your application is built using Python

```bash
pip install langtrace-python-sdk
```

```python
from langtrace_python_sdk import langtrace

langtrace.init(api_key='<LANGTRACE_API_KEY>')  # Replace the placeholder with your API key
```

OR

```python

from langtrace_python_sdk import langtrace

langtrace.init() # LANGTRACE_API_KEY as an ENVIRONMENT variable

```
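The two initialization styles can be combined in a small startup helper. This is a sketch under stated assumptions: `resolve_api_key` and `init_tracing` are hypothetical helpers, not part of the SDK, and preferring an explicitly passed key over the environment variable is a choice made for this example. Only `langtrace.init(api_key=...)` and the `LANGTRACE_API_KEY` variable come from the snippets above. The import is guarded so the sketch still runs where the SDK is not installed.

```python
import os
from typing import Optional


def resolve_api_key(explicit: Optional[str] = None) -> str:
    """Hypothetical helper: use the key passed in, else LANGTRACE_API_KEY."""
    key = explicit or os.environ.get("LANGTRACE_API_KEY", "")
    if not key:
        raise RuntimeError("Set LANGTRACE_API_KEY or pass an API key explicitly")
    return key


def init_tracing(explicit: Optional[str] = None) -> bool:
    """Initialize Langtrace if the SDK is installed; return True on success."""
    try:
        from langtrace_python_sdk import langtrace
    except ImportError:
        return False  # SDK not installed; the application runs untraced
    langtrace.init(api_key=resolve_api_key(explicit))
    return True
```

Failing fast on a missing key makes a misconfigured deployment visible at startup instead of silently producing no traces.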

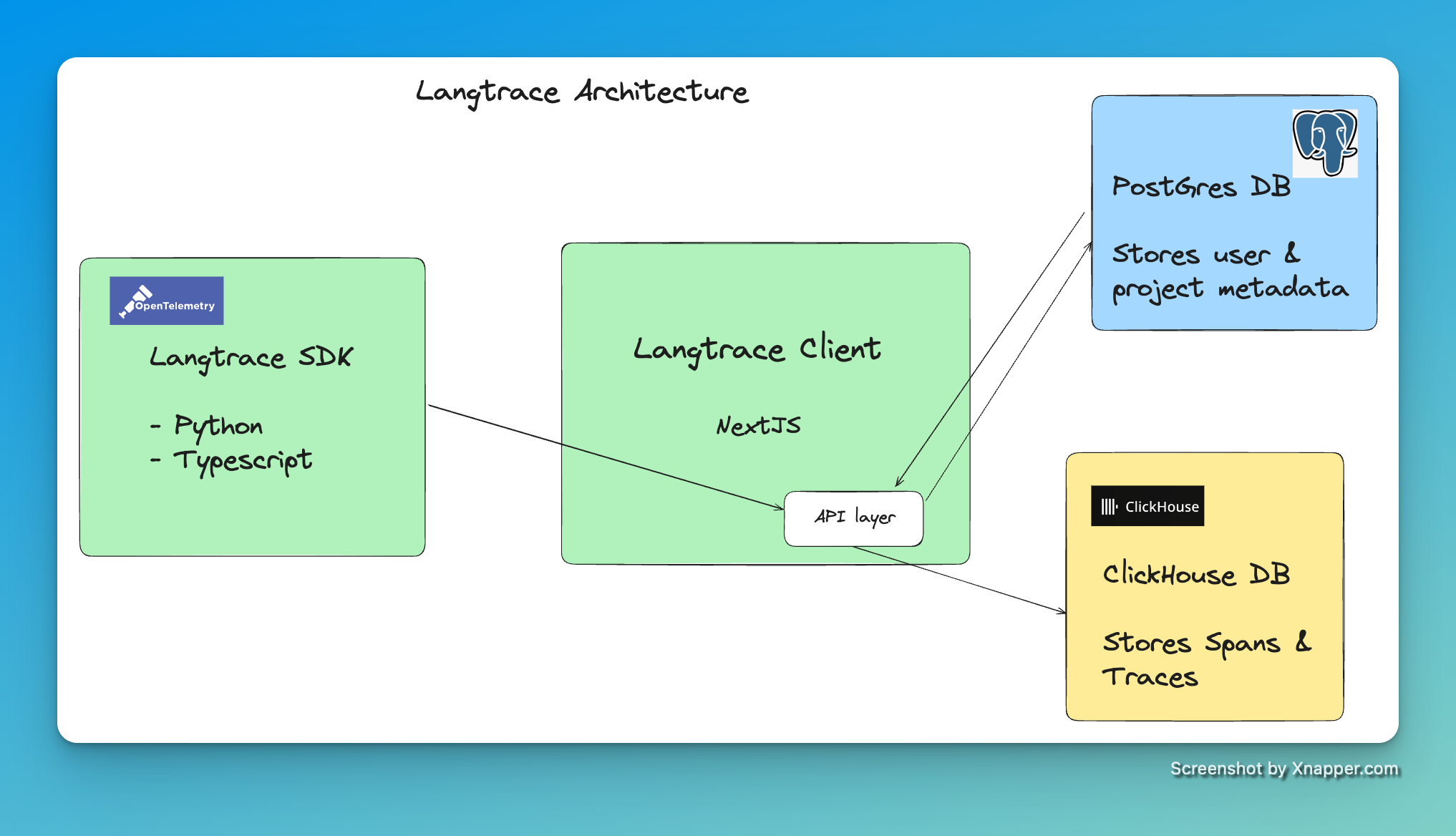

### 🏠 Langtrace self hosted

To run Langtrace locally, you have to run three services:

- Next.js app

- Postgres database

- Clickhouse database

> [!IMPORTANT]
> Check out our [documentation](https://docs.langtrace.ai/hosting/overview) for various deployment options and configurations.

Requirements:

- Docker

- Docker Compose

#### The .env file

Feel free to modify the `.env` file to suit your needs.

#### Starting the servers

```bash

docker compose up

```

The application will be available at `http://localhost:3000`.
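The containers can take a while to come up, so it may help to wait for the web app before opening it or pointing an SDK at it. The following sketch polls the URL above using only the Python standard library. `is_up` and `wait_until_up` are hypothetical helper names, and treating any HTTP response (including error statuses) as "up" is an assumption of this example, not a documented Langtrace health check.

```python
import time
import urllib.error
import urllib.request


def is_up(url: str, timeout: float = 2.0) -> bool:
    """Return True if the server answers the request with any HTTP response."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        return True  # the server responded, even if with an error status
    except (urllib.error.URLError, OSError):
        return False  # connection refused, timeout, DNS failure, and similar


def wait_until_up(url: str, attempts: int = 30, delay: float = 2.0) -> bool:
    """Poll the URL until it responds or the attempts run out."""
    for _ in range(attempts):
        if is_up(url):
            return True
        time.sleep(delay)
    return False
```

For example, `wait_until_up("http://localhost:3000")` returns `True` as soon as the app responds and `False` if it has not responded after all attempts.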

#### Take down the setup

To delete the containers and volumes:

```bash

docker compose down -v

```

The `-v` flag also deletes the volumes.

## Telemetry

Langtrace does NOT collect any telemetry if you are self-hosting the OSS client. None of your data leaves your servers.

---

## 🔗 Supported Integrations

Langtrace automatically captures traces from the following vendors and frameworks:
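As a rough illustration of how automatic capture of this kind can work, the sketch below wraps a client method so that every call records its name, duration, and outcome. It shows the general monkey-patching technique only and is not Langtrace's implementation; `FakeLLMClient`, `instrument`, and the `recorded` list are hypothetical names invented for this example.

```python
import functools
import time

recorded = []  # stand-in for a span exporter


class FakeLLMClient:
    """Hypothetical client standing in for a vendor SDK."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def instrument(cls, method_name: str) -> None:
    """Replace cls.method_name with a wrapper that records each call."""
    original = getattr(cls, method_name)

    @functools.wraps(original)
    def wrapper(self, *args, **kwargs):
        start = time.perf_counter()
        status = "ok"
        try:
            return original(self, *args, **kwargs)
        except Exception:
            status = "error"
            raise
        finally:
            # Runs on both success and failure, so every call is recorded.
            recorded.append({
                "name": f"{cls.__name__}.{method_name}",
                "duration_s": time.perf_counter() - start,
                "status": status,
            })

    setattr(cls, method_name, wrapper)


instrument(FakeLLMClient, "complete")
reply = FakeLLMClient().complete("hello")  # application code is unchanged
```

With patching of this style, a function reference captured before the patch is applied keeps pointing at the unwrapped original, which is one plausible reason an instrumentation SDK would ask to be imported and initialized before any LLM modules.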

### LLM Providers

| Provider | TypeScript SDK | Python SDK |
|----------|:-------------:|:----------:|
| OpenAI | ✅ | ✅ |
| Anthropic | ✅ | ✅ |
| Azure OpenAI | ✅ | ✅ |
| Cohere | ✅ | ✅ |
| DeepSeek | ✅ | ✅ |
| xAI | ✅ | ✅ |
| Groq | ✅ | ✅ |
| Perplexity | ✅ | ✅ |
| Gemini | ✅ | ✅ |
| AWS Bedrock | ✅ | ✅ |
| Mistral | ❌ | ✅ |

### LLM Frameworks

| Framework | TypeScript SDK | Python SDK |
|-----------|:-------------:|:----------:|
| Langchain | ❌ | ✅ |
| LlamaIndex | ✅ | ✅ |
| Langgraph | ❌ | ✅ |
| LiteLLM | ❌ | ✅ |
| DSPy | ❌ | ✅ |
| CrewAI | ❌ | ✅ |
| Ollama | ❌ | ✅ |
| VertexAI | ✅ | ✅ |
| Vercel AI | ✅ | ❌ |
| GuardrailsAI | ❌ | ✅ |
| Arch | ❌ | ✅ |
| Graphlit | ❌ | ✅ |
| Agno | ❌ | ✅ |
| Phidata | ❌ | ✅ |
| Cleanlab | ❌ | ✅ |

### Vector Databases

| Database | TypeScript SDK | Python SDK |
|----------|:-------------:|:----------:|
| Pinecone | ✅ | ✅ |
| ChromaDB | ✅ | ✅ |
| QDrant | ✅ | ✅ |
| Weaviate | ✅ | ✅ |
| PGVector | ✅ | ✅ (SQLAlchemy) |
| MongoDB | ❌ | ✅ |
| Milvus | ❌ | ✅ |

---

## 📐 Langtrace System Architecture

---

## 💡 Feature Requests and Issues

- To request a feature, [start a discussion](https://github.com/Scale3-Labs/langtrace/discussions/categories/feature-requests).

- To report a problem, [create an issue](https://github.com/Scale3-Labs/langtrace/issues).

---

## 🤝 Contributions

We welcome contributions to this project. To get started, fork this repository and start developing. To get involved, join our Slack workspace.

---

## 🌟 Langtrace Star History

[Star history chart](https://star-history.com/#Scale3-Labs/langtrace&Timeline)

---

## 🔒 Security

To report security vulnerabilities, email us at . You can read more on security [here](https://github.com/Scale3-Labs/langtrace/blob/development/SECURITY.md).

---

## 📜 License

- The Langtrace application (this repository) is [licensed](https://github.com/Scale3-Labs/langtrace/blob/development/LICENSE) under the AGPL 3.0 License. You can read about this license [here](https://www.gnu.org/licenses/agpl-3.0.en.html).

- Langtrace SDKs are licensed under the Apache 2.0 License. You can read about this license [here](https://www.apache.org/licenses/LICENSE-2.0).

## 👥 Contributors

karthikscale3

dylanzuber-scale3

darshit-s3

rohit-kadhe

yemiadej

alizenhom

obinnascale3

Cruppelt

Dnaynu

jatin9823

MayuriS24

NishantRana07

obinnaokafor

heysagnik

dabiras3

---

## ❓ Frequently Asked Questions

**1. Can I self host and run Langtrace in my own cloud?**

Yes, you can absolutely do that. Follow the self hosting setup instructions in our [documentation](https://docs.langtrace.ai/hosting/overview).

**2. What is the pricing for Langtrace cloud?**

Currently, we are not charging anything for Langtrace cloud and we are primarily looking for feedback so we can continue to improve the project. We will inform our users when we decide to monetize it.

**3. What is the tech stack of Langtrace?**

Langtrace uses NextJS for the frontend and APIs. It uses PostgresDB as a metadata store and Clickhouse DB for storing spans, metrics, logs and traces.

**4. Can I contribute to this project?**

Absolutely! We love developers and welcome contributions. Get involved early by joining our [Discord Community](https://discord.langtrace.ai/).

**5. What skillset is required to contribute to this project?**

Programming Languages: Typescript and Python.

Framework knowledge: NextJS.

Database: Postgres and Prisma ORM.

Nice to have: familiarity with the OpenTelemetry instrumentation framework and experience with distributed tracing.