https://github.com/vocalpy/vak

A neural network framework for researchers studying acoustic communication

animal-communication animal-vocalizations bioacoustic-analysis bioacoustics birdsong python python3 pytorch spectrograms speech-processing torch torchvision vocalizations

Last synced: about 1 year ago

- Host: GitHub

- URL: https://github.com/vocalpy/vak

- Owner: vocalpy

- License: bsd-3-clause

- Created: 2019-03-03T11:34:38.000Z (about 7 years ago)

- Default Branch: main

- Last Pushed: 2025-01-16T00:58:41.000Z (over 1 year ago)

- Last Synced: 2025-02-14T06:11:27.222Z (over 1 year ago)

- Topics: animal-communication, animal-vocalizations, bioacoustic-analysis, bioacoustics, birdsong, python, python3, pytorch, spectrograms, speech-processing, torch, torchvision, vocalizations

- Language: Python

- Homepage: https://vak.readthedocs.io

- Size: 196 MB

- Stars: 80

- Watchers: 3

- Forks: 17

- Open Issues: 130

Metadata Files:

- Readme: README.md

- Contributing: .github/CONTRIBUTING.md

- License: LICENSE

- Code of conduct: CODE_OF_CONDUCT.md

- Citation: CITATION.cff

Awesome Lists containing this project

- awesome-acoustic - vak

- open-sustainable-technology - vak - A neural network framework for animal acoustic communication and bioacoustics. (Biosphere / Bioacoustics and Acoustic Data Analysis)

README

## A neural network framework for researchers studying acoustic communication

[DOI](https://zenodo.org/badge/latestdoi/173566541)

[All Contributors](#contributors-)

[PyPI version](https://badge.fury.io/py/vak)

[License: BSD-3-Clause](https://opensource.org/licenses/BSD-3-Clause)

[CI (Linux)](https://github.com/vocalpy/vak/actions/workflows/ci-linux.yml/badge.svg)

[Code coverage](https://codecov.io/gh/vocalpy/vak)

`vak` is a Python framework for neural network models,

designed for researchers studying acoustic communication:

how and why animals communicate with sound.

Many people will be familiar with work in this area on

animal vocalizations such as birdsong, bat calls, and even human speech.

Neural network models have provided a powerful new tool for researchers in this area,

as in many other fields.

The library has two main goals:

1. Make it easier for researchers studying acoustic communication to apply neural network algorithms to their data

2. Provide a common framework that will facilitate benchmarking neural network algorithms on tasks related to acoustic communication

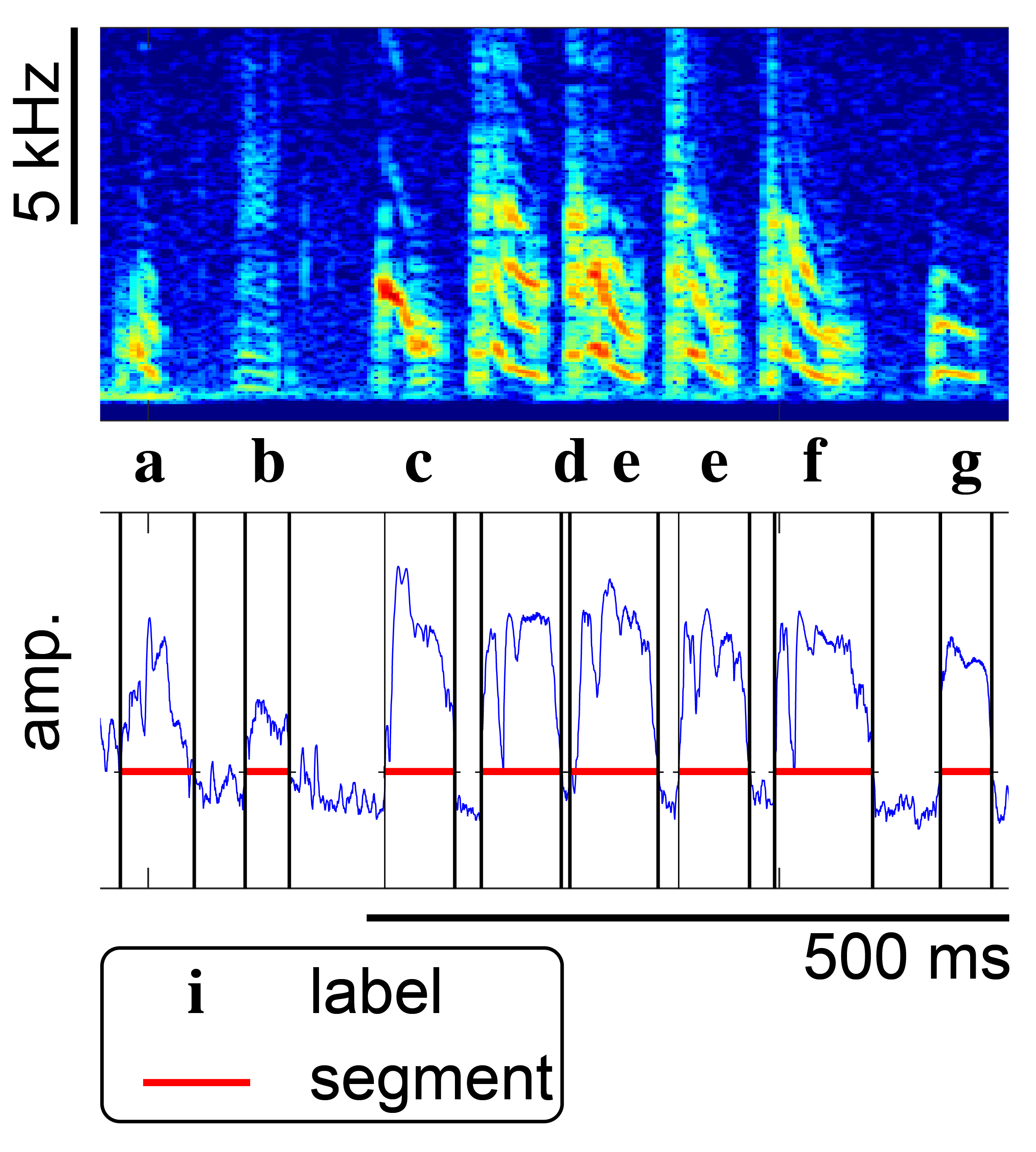

Currently, the main use is an automatic *annotation* of vocalizations and other animal sounds.

By *annotation*, we mean something like the example of annotated birdsong shown below:

You give `vak` training data in the form of audio or spectrogram files with annotations,

and then `vak` helps you train neural network models

and use the trained models to predict annotations for new files.
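To make *annotation* more concrete: one common way to represent it is as a list of labeled segments, each with an onset time, an offset time, and a label. The sketch below is illustrative only and does not use `vak`'s API. The `Segment` class, the `frames_to_segments` function, the `"-"` background label, and the 0.5 s frame duration are all assumptions made for this example. It shows how a sequence of per-frame labels, the kind of output a segmenting network might produce, could be collapsed into segments.

```python
from dataclasses import dataclass
from itertools import groupby


@dataclass(frozen=True)
class Segment:
    """One annotated segment (hypothetical container, not part of vak)."""
    onset_s: float
    offset_s: float
    label: str


def frames_to_segments(frame_labels, frame_dur_s, background="-"):
    """Collapse runs of identical per-frame labels into labeled segments.

    Frames labeled ``background`` are treated as unlabeled and skipped.
    """
    segments = []
    start = 0  # index of the first frame in the current run
    for label, run in groupby(frame_labels):
        n_frames = len(list(run))
        if label != background:
            segments.append(
                Segment(
                    onset_s=start * frame_dur_s,
                    offset_s=(start + n_frames) * frame_dur_s,
                    label=label,
                )
            )
        start += n_frames
    return segments


# Toy example: 10 frames of 0.5 s each, containing syllables "a" and "b"
frame_labels = ["-", "a", "a", "a", "-", "-", "b", "b", "-", "-"]
segments = frames_to_segments(frame_labels, frame_dur_s=0.5)
for seg in segments:
    print(f"{seg.label}: {seg.onset_s:.1f} s to {seg.offset_s:.1f} s")
```

In this toy case the ten frames reduce to two segments, one per syllable, which is the kind of information an annotation file typically stores.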

We developed `vak` to benchmark a neural network model we call [`tweetynet`](https://github.com/yardencsGitHub/tweetynet).

Please see the eLife article here: https://elifesciences.org/articles/63853

To learn more about the goals and design of vak,

please see this talk from the SciPy 2023 conference,

and the associated Proceedings paper

[here](https://conference.scipy.org/proceedings/scipy2023/pdfs/david_nicholson.pdf).

For more background on animal acoustic communication and deep learning,

and how these intersect with related fields like

computational ethology and neuroscience,

please see the ["About"](#About) section below.

### Installation

Short version:

#### with `pip`

```console
$ pip install vak
```

#### with `conda`

```console
$ conda install vak -c pytorch -c conda-forge
$ # ^ notice additional channel!
```

Notice that for `conda` you specify two channels,

and that the `pytorch` channel should come first,

so it takes priority when installing the dependencies `pytorch` and `torchvision`.

For more details, please see:

https://vak.readthedocs.io/en/latest/get_started/installation.html

We test `vak` on Ubuntu and macOS. We have run `vak` on Windows and

know of other users successfully running `vak` on that operating system,

but installation on Windows may require some troubleshooting.

A good place to start is by searching the [issues](https://github.com/vocalpy/vak/issues).
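After installing with either method, one quick way to confirm that the package is visible in the active environment is to query its installed version. This is a minimal sketch that uses only the Python standard library (`importlib.metadata`, available in Python 3.8 and later); the helper name `installed_version` is made up for this example and is not part of `vak`.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(package):
    """Return the installed version string of ``package``, or None if absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


vak_version = installed_version("vak")
if vak_version is None:
    print("vak is not installed in this environment")
else:
    print(f"vak {vak_version} is installed")
```

If this reports that `vak` is missing right after an install, the usual cause is that a different environment is active than the one you installed into.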

### Usage

#### Tutorial

Currently the easiest way to work with `vak` is through the command line.

You run it with configuration files, using one of a handful of commands.

For more details, please see the "autoannotate" tutorial here:

https://vak.readthedocs.io/en/latest/get_started/autoannotate.html
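As a rough sketch of that workflow, the snippet below builds command lines of the form `vak <command> <config file>`. The command names (`prep`, `train`, `predict`), the invocation shape, and the file name `my_config.toml` are assumptions made for illustration; the tutorial linked above is the authoritative reference for the actual commands and configuration format. The helpers `build_vak_command` and `run_steps` are hypothetical, and nothing is executed unless you pass `run=True`.

```python
import shlex
import subprocess

# Assumed command names for illustration; check the tutorial for the real list.
ASSUMED_COMMANDS = ("prep", "train", "predict")


def build_vak_command(command, config_path):
    """Return the argument list for one assumed `vak <command> <config>` call."""
    if command not in ASSUMED_COMMANDS:
        raise ValueError(f"unexpected command: {command!r}")
    return ["vak", command, str(config_path)]


def run_steps(steps, run=False):
    """Print each command line and, only if ``run`` is True, execute it."""
    for command, config_path in steps:
        args = build_vak_command(command, config_path)
        print(shlex.join(args))
        if run:
            # check=True stops the loop at the first step that fails
            subprocess.run(args, check=True)


# Hypothetical config file name; this dry run only prints the commands.
run_steps([("prep", "my_config.toml"), ("train", "my_config.toml")])
```

Keeping the dry run as the default makes it easy to review the sequence of steps before committing to a long training job.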

#### How can I use my data with `vak`?

Please see the How-To Guides in the documentation here:

https://vak.readthedocs.io/en/latest/howto/index.html

### Support / Contributing

For help, please begin by checking out the Frequently Asked Questions:

https://vak.readthedocs.io/en/latest/faq.html

To ask a question about vak, discuss its development,

or share how you are using it,

please start a new "Q&A" topic on the VocalPy forum

with the vak tag.

To report a bug, or to request a feature,

please use the issue tracker on GitHub: https://github.com/vocalpy/vak/issues

For a guide on how you can contribute to `vak`, please see:

https://vak.readthedocs.io/en/latest/development/index.html

### Citation

If you use vak for a publication, please cite both the Proceedings paper and the software.

#### Proceedings paper (BibTeX)

```
@inproceedings{nicholson2023vak,
  title={vak: a neural network framework for researchers studying animal acoustic communication},
  author={Nicholson, David and Cohen, Yarden},
  booktitle={Python in Science Conference},
  pages={59--67},
  year={2023}
}
```

#### Software

[DOI (Zenodo)](https://zenodo.org/badge/latestdoi/173566541)

### License

The [BSD-3-Clause](https://opensource.org/licenses/BSD-3-Clause) license is [here](./LICENSE).

### About

Are humans unique among animals?

We speak languages, but is speech somehow like other animal behaviors, such as birdsong?

Questions like these are answered by studying how animals communicate with sound.

This research requires cutting-edge computational methods and big team science across a wide range of disciplines,

including ecology, ethology, bioacoustics, psychology, neuroscience, linguistics, and genomics [^1][^2][^3].

As in many other domains, this research is being revolutionized by deep learning algorithms [^1][^2][^3].

Deep neural network models enable answering questions that were previously impossible to address,

in part because these models automate analysis of very large datasets.

Within the study of animal acoustic communication, multiple models have been proposed for similar tasks,

often implemented as research code with different libraries, such as Keras and PyTorch.

This situation has created a real need for a framework that allows researchers to easily benchmark models

and apply trained models to their own data. To address this need, we developed vak.

We originally developed vak to benchmark a neural network model, TweetyNet [^4][^5],

that automates annotation of birdsong by segmenting spectrograms.

TweetyNet and vak have been used in both neuroscience [^6][^7][^8] and bioacoustics [^9].

For additional background and papers that have used `vak`,

please see: https://vak.readthedocs.io/en/latest/reference/about.html

[^1]: https://www.frontiersin.org/articles/10.3389/fnbeh.2021.811737/full

[^2]: https://peerj.com/articles/13152/

[^3]: https://www.jneurosci.org/content/42/45/8514

[^4]: https://elifesciences.org/articles/63853

[^5]: https://github.com/yardencsGitHub/tweetynet

[^6]: https://www.nature.com/articles/s41586-020-2397-3

[^7]: https://elifesciences.org/articles/67855

[^8]: https://elifesciences.org/articles/75691

[^9]: https://journals.plos.org/plosone/article?id=10.1371/journal.pone.0278522

#### "Why this name, vak?"

It has only three letters, so it is quick to type,

and it wasn't taken on [pypi](https://pypi.org/) yet.

Also I guess it has [something to do with speech](https://en.wikipedia.org/wiki/V%C4%81c).

"vak" rhymes with "squawk" and "talk".

#### Does your library have any poems?

[Yes.](https://vak.readthedocs.io/en/latest/poems/index.html)

## Contributors ✨

Thanks goes to these wonderful people ([emoji key](https://allcontributors.org/docs/en/emoji-key)):

- avanikop: 🐛

- Luke Poeppel: 📖

- yardencsGitHub: 💻 🤔 📢 📓 💬

- David Nicholson: 🐛 💻 🔣 📖 💡 🤔 🚇 🚧 🧑‍🏫 📆 👀 💬 📢 ⚠️ ✅

- marichard123: 📖

- Therese Koch: 📖 🐛

- alyndanoel: 🤔

- adamfishbein: 📖

- vivinastase: 🐛 📓 🤔

- kaiyaprovost: 💻 🤔

- ymk12345: 🐛 📖

- neuronalX: 🐛 📖

- Khoa: 📖

- sthaar: 📖 🐛 🤔

- yangzheng-121: 🐛 🤔

- lmpascual: 📖

- ItamarFruchter: 📖

- Hjalmar K. Turesson: 🐛 🤔

- nhoglen: 🐛

- Ja-sonYun: 💻

- Jacqueline: 🐛

- Mark Muldoon: 🐛

- zhileiz1992: 🐛 💻

- Maris Basha: 🤔 💻

- Daniel Müller-Komorowska: 📖

- meriablue: 📖

- Henri Combrink: 🐛

- milaXT: 🐛

This project follows the [all-contributors](https://github.com/all-contributors/all-contributors) specification. Contributions of any kind welcome!